The OpenAI Keynote

By Ben Thompson at Stratechery

In 2013, when I started Stratechery, there was no bigger event than the launch of the new iPhone; its only rival was Google I/O, which is when the newest version of Android was unveiled (hardware always breaks the tie, including with Apple’s iOS introductions at WWDC). It wasn’t just that smartphones were relatively new and still adding critical features, but that the strategic decisions and ultimate fates of the platforms were still an open question. More than that, the entire future of the tech industry was clearly tied up in said platforms and their corresponding operating systems and devices; how could keynotes not be a big deal?

Fast forward a decade and the tech keynote has diminished in importance and, in the case of Apple, disappeared completely, replaced by a pre-recorded marketing video. I want to be mad about it, but it makes sense: it is not Apple’s presentation that has diminished the iPhone introduction; rather, Apple’s presentations reflect the reality that the most important questions around an iPhone are about marketing tactics. How do you segment the iPhone line? How do you price? What sort of brand affinity are you seeking to build? There, I just summarized the iPhone 15 introduction, and the reality that the smartphone era (The End of the Beginning) is over as far as strategic considerations are concerned. iOS and Android are a given, but what is next and as yet unknown?

The answer is, clearly, AI, but even there, the energy seems muted: Apple hasn’t talked about generative AI other than to assure investors on earnings calls that they are working on it; Google I/O was of course about AI, but mostly in the context of Google’s own products (few of which have actually shipped), and my Article at the time was quickly side-tracked into philosophical discussions about the nature of AI innovation (sustaining versus disruptive), the question of tech revolution versus alignment, and a preview of the coming battles of regulation that arrived with last week’s Executive Order on AI.

Meta’s Connect keynote was much more interesting: not only were AI characters being added to Meta’s social networks, but next year you will be able to take AI with you via Smart Glasses (I told you hardware was interesting!). Nothing, though, seemed to match the energy around yesterday’s OpenAI developer conference, their first ever: there is nothing more interesting in tech than a consumer product with product-market fit. And that, for me, is enough to bring back an old Stratechery standby: the keynote day-after.

Keynote Metaphysics and GPT-4 Turbo

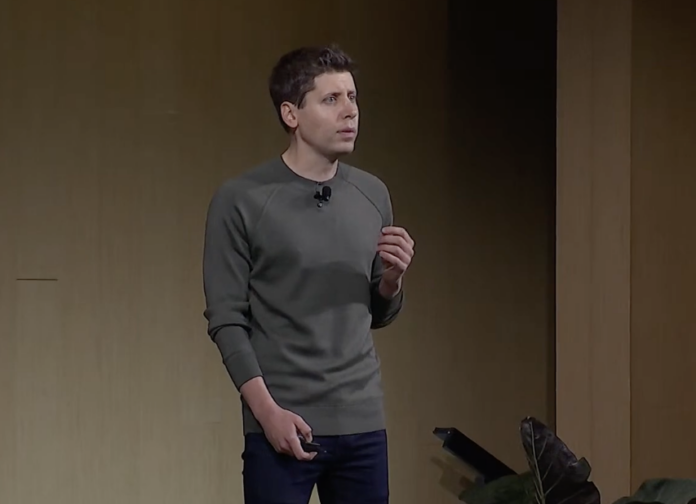

This was, first and foremost, a really good keynote, in the keynote-as-artifact sense. CEO Sam Altman, in a humorous exchange with Microsoft CEO Satya Nadella, promised, “I won’t take too much of your time”; never mind that Nadella was presumably in San Francisco just for this event: in this case he stood in for the audience who witnessed a presentation that was tight, with content that was interesting, leaving them with a desire to learn more.

Altman himself had a good stage presence, with the sort of nervous energy that is only present in a live keynote; the fact he never seemed to know which side of the stage a fellow presenter was coming from was humanizing. Meanwhile, the live demos not only went off without a hitch, but leveraged the fact that they were live: in one instance a presenter instructed a GPT she created to text Altman; he held up his phone to show he got the message. In another a GPT randomly selected five members of the audience to receive $500 in OpenAI API credits, only to then extend it to everyone.

New products and features, meanwhile, were available “today”, not weeks or months in the future, as is increasingly the case for events like I/O or WWDC; everything combined to give a palpable sense of progress and excitement, which, when it comes to AI, is mostly true.

GPT-4 Turbo is an excellent example of what I mean by “mostly”. The API consists of six new features:

- Increased context length

- More control, specifically in terms of model inputs and outputs

- Better knowledge, which both means updating the cut-off date for knowledge about the world to April 2023 and providing the ability for developers to easily add their own knowledge base

- New modalities, as DALL-E 3, Vision, and TTS (text-to-speech) will all be included in the API, with a new version of Whisper speech recognition coming

- Customization, including fine-tuning, and custom models (which, Altman warned, won’t be cheap)

- Higher rate limits

This is, to be clear, still the same foundational model (GPT-4); these features just make the API more usable, both in terms of features and also performance. It also speaks to how OpenAI is becoming more of a product company, with iterative enhancements of its core functionality. Yes, the mission still remains AGI (artificial general intelligence), and the core scientific team is almost certainly working on GPT-5, but Altman and team aren’t just tossing models over the wall for the rest of the industry to figure out.

Price and Microsoft

The next “feature” was tied into the GPT-4 Turbo introduction: the API is getting cheaper (3x cheaper for input tokens, and 2x cheaper for output tokens). Unsurprisingly this announcement elicited cheers from the developers in attendance; what I cheered as an analyst was Altman’s clear articulation of the company’s priorities: lower price first, speed later. You can certainly debate whether that is the right set of priorities (I think it is, because the biggest need now is for increased experimentation, not optimization), but what I appreciated was the clarity.
