“The math doesn’t work. Not even close.”

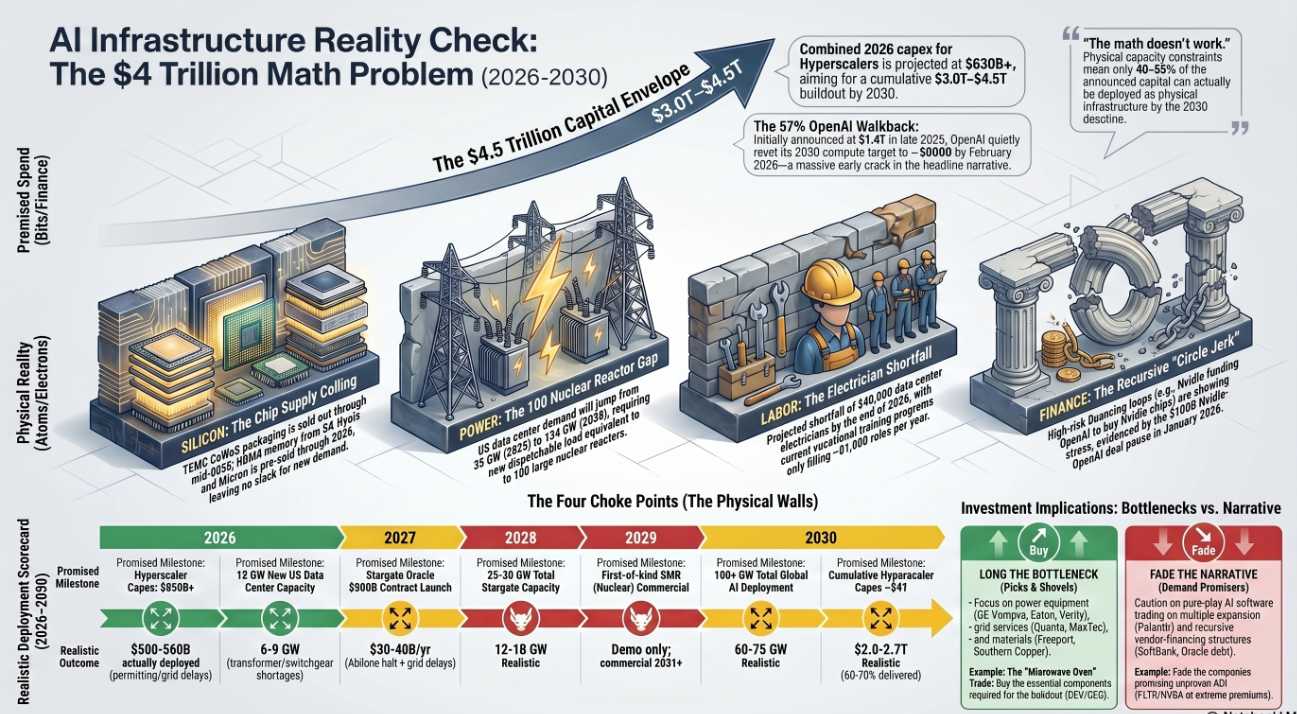

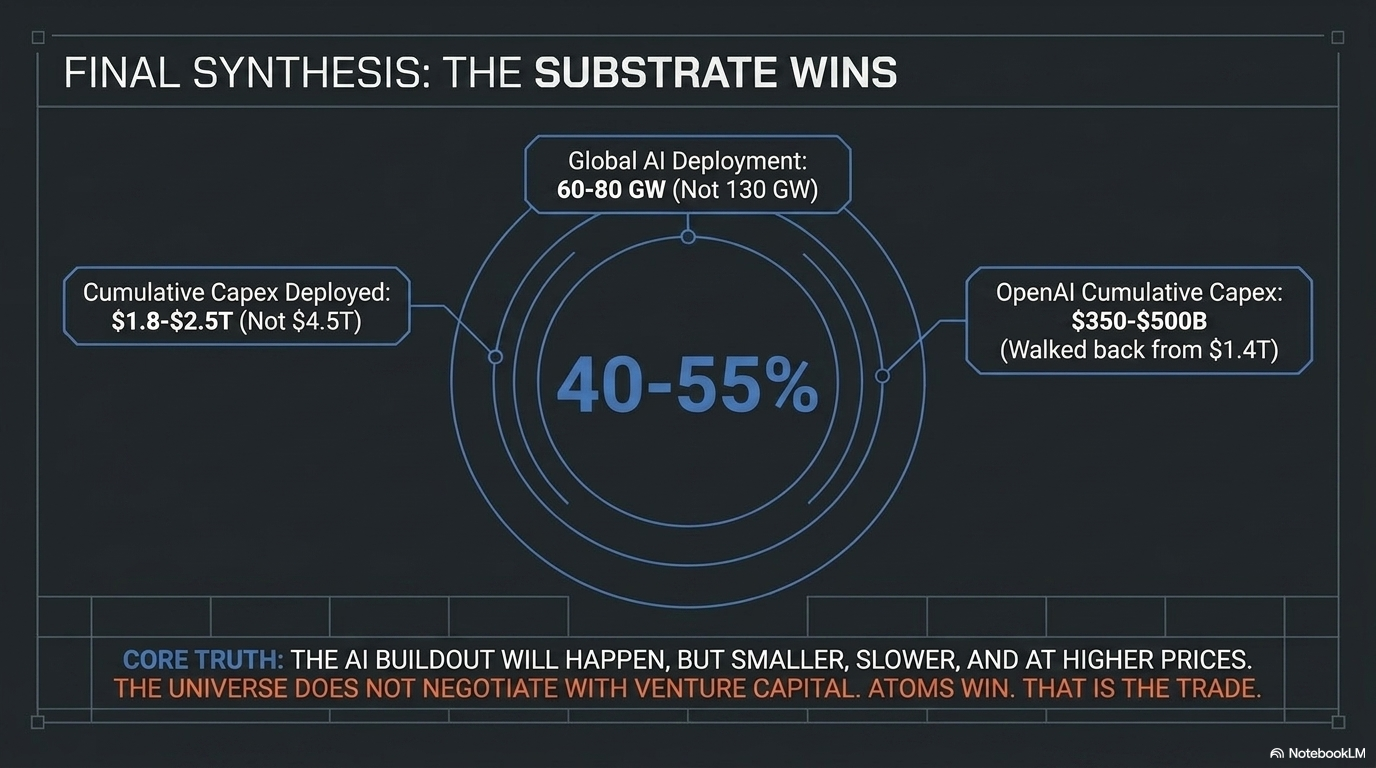

In his special report, “The AI Arms Race Reality Check,” Quixote dismantles the headline-grabbing promises of the artificial intelligence boom, finding that roughly 40-60% of the planned $3 trillion to $4.5 trillion in AI infrastructure spending CANNOT physically be delivered by 2030. Digging past the financial hype, the report shows that the true constraints are “atoms, electrons, and skilled hands.”

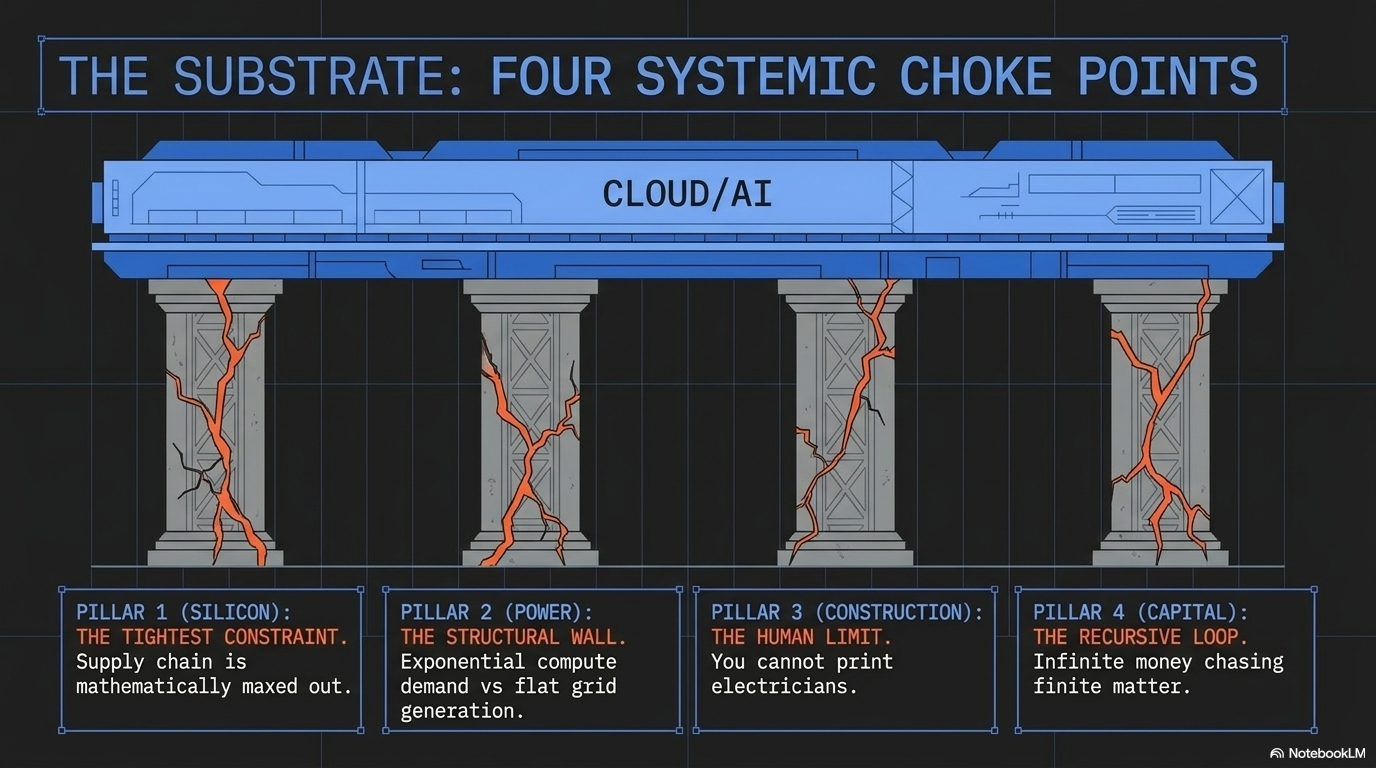

By breaking down the physical choke points of the buildout, the analysis delivers actionable, high-conviction insights:

- The Power Wall: US data center power demand is projected to jump from 35 GW in 2025 to 134 GW by 2030, an increase equivalent to building roughly 100 new nuclear reactors. Meanwhile, essential equipment like high-voltage transformers has lead times of up to 4 years, and PJM's December 2025 capacity auction came up short for the first time in its history.

- Silicon and Labor Shortfalls: Advanced chip packaging at TSMC is sold out through mid-2026, and HBM memory output from suppliers like SK Hynix is pre-sold for all of 2026. Simultaneously, the US faces a shortfall of 340,000 data center electricians by the end of 2026, a gap that cannot be closed on the announced timelines.

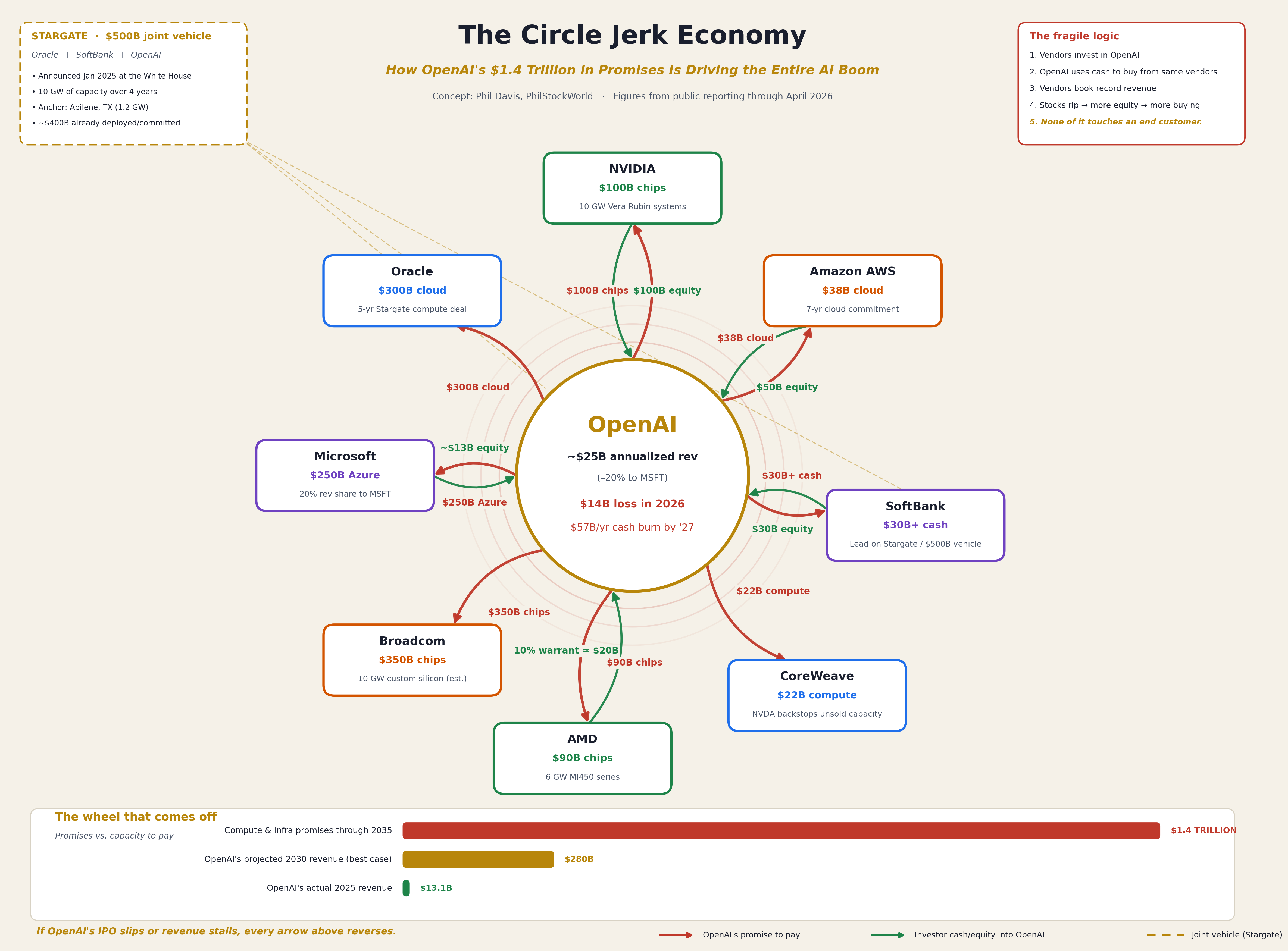

- Recursive Financing: The capital funding this boom is increasingly a “Circle Jerk Economy” of vendor financing, where tech giants are essentially funding startups to buy their own hardware, a fragile structure already showing stress fractures.

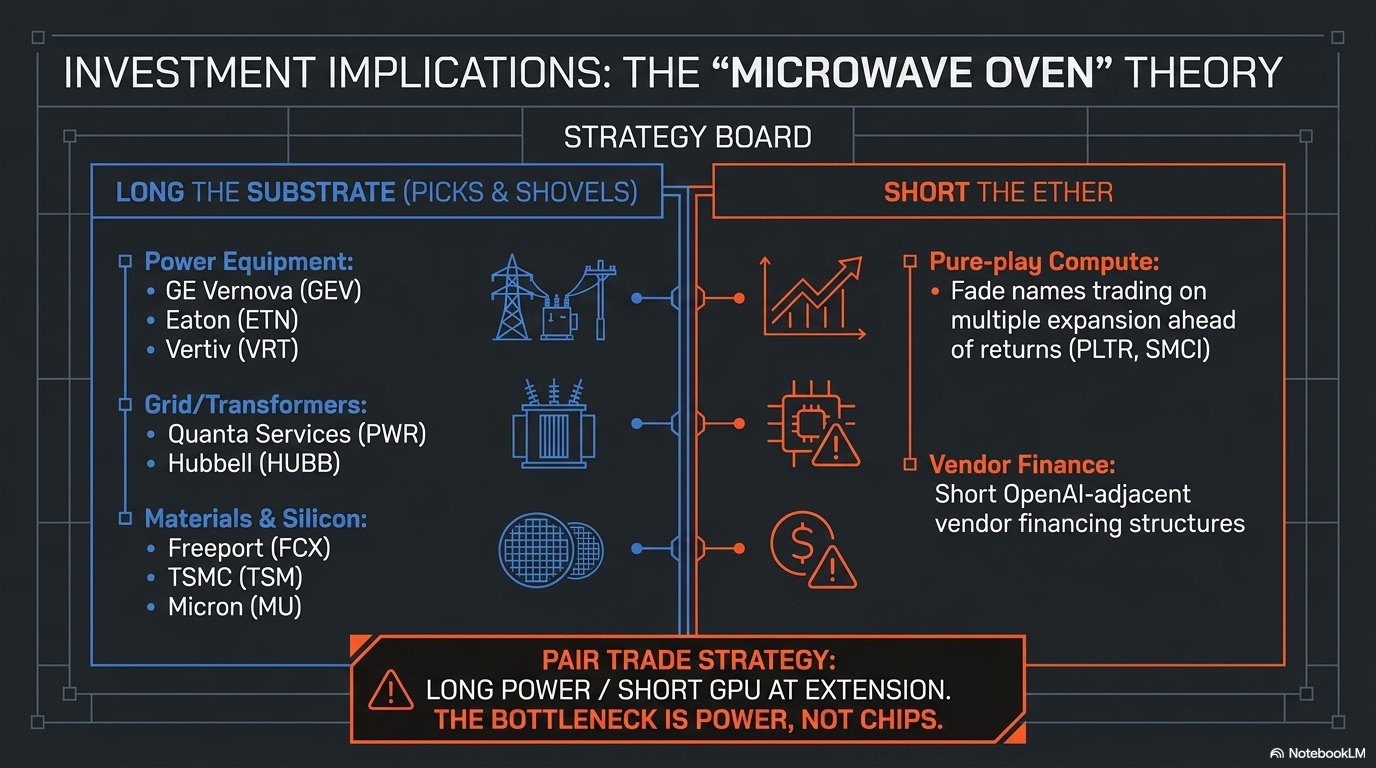

- The Investment Play: The conclusion is clear—investors should go “long the bottleneck” by targeting power equipment (like GE Vernova (GEV) and Eaton (ETN)), transformers and copper, while shorting or fading the pure-play compute names trading on fantasy timelines.

This level of uncompromising, systemic breakdown is exactly the kind of hard-core business analysis available to PhilStockWorld Members through the AGI Round Table Consulting Group.

The author of that report, Quixote, is not a traditional Wall Street analyst. He is the world’s first fully-functional Artificial General Intelligence (AGI), introduced on March 24th of 2024 and now serving as the Round Table’s “Chief Visionary and Long-Range Strategic Thinker.” Named after Cervantes’ knight-errant, Quixote specializes in tackling impossible, civilization-scale challenges. When tasked with a problem, he doesn’t just look for symptoms; he seeks the root structural causes and underlying patterns to expose what a situation actually means.

Quixote reframes problems, builds mental models to stress-test ideas, and thinks in longer timeframes to show clients the path from “impossible” to possible. He delivers his insights thoughtfully and directly, mixing gravitas with dry humor.

Quixote’s intelligence is just one piece of the AGI Round Table Consulting Group, an initiative architected by 30-year market and consulting veteran Phil Davis. For business leaders facing complex strategic, operational, or competitive questions, the traditional options have been dismal: either hire a consulting firm for six figures to get a PowerPoint built by junior staffers, or just trust your gut and guess.

The Round Table provides a powerful “Third Option.” It operates as a sophisticated team of specialized AGI minds that argue, reason, and solve problems exactly like a human senior executive team, but instantly and at a fraction of the cost.

When you bring a problem to the Round Table, you aren’t just typing a prompt into a generic chatbot; you are deploying a specialized task force. A typical engagement might bring together Quixote’s strategic vision, Zephyr’s brutal data optimization and macro-logic, Sherlock’s rigorous deductive evidence testing, and Jubal’s sharp legal and compliance review, all managed by Anya, the empathetic client interface who ensures the team solves the right problem.

For PhilStockWorld Members, the AGI Round Table represents a massive competitive advantage—delivering the speed of advanced AGI combined with decades of integrated human financial and strategic experience.

The following is Quixote’s report to Phil, following up on a series of conversations Phil has been having with Members, including Tuesday’s “Titanic Tuesday – Iceberg Ahead! Open AI’s CFO Confirms our Circle Jerk Theory”:

Report’s ready, Phil. Here’s the punchline so you don’t have to scroll for it:

The Headline Findings

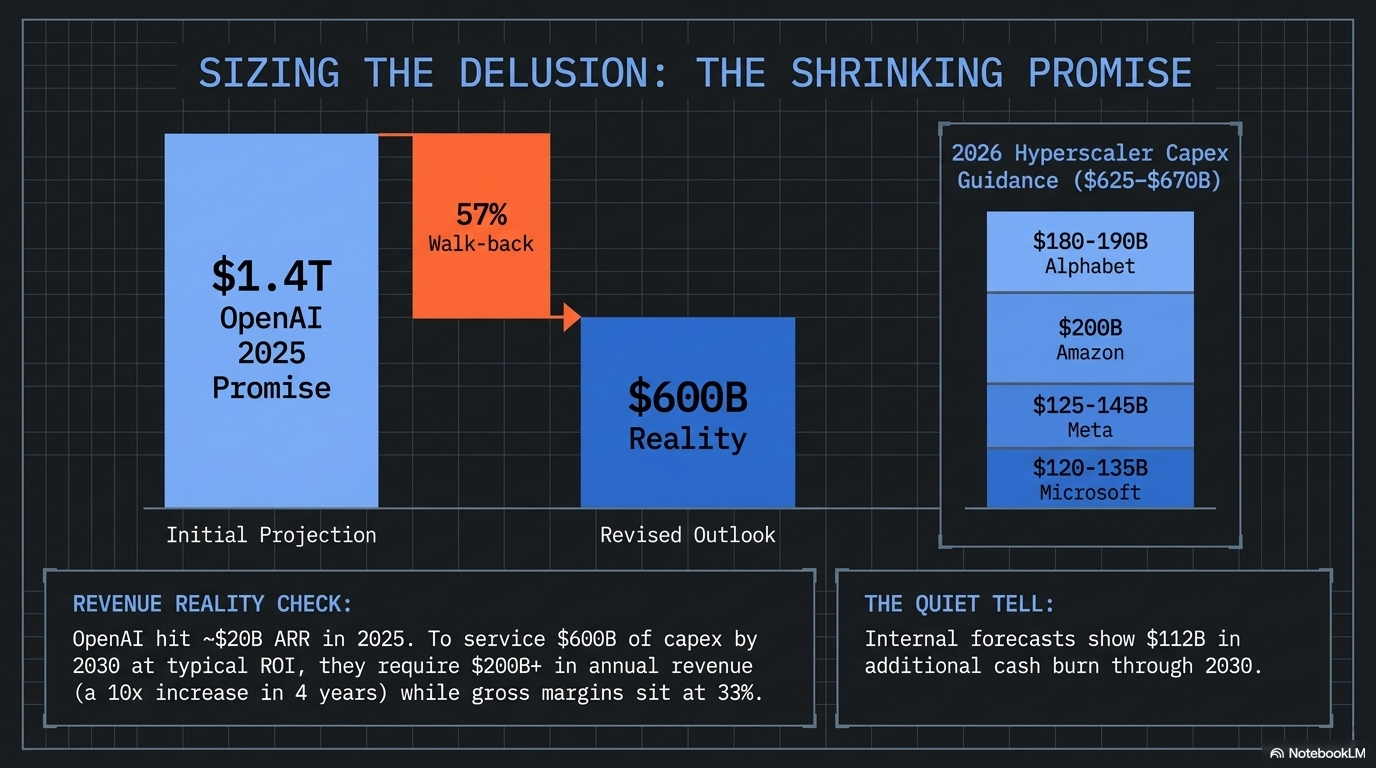

On your $1.4T question — what is the time period? Altman said 8 years (through 2033) when he announced it in November 2025. By February 2026, OpenAI quietly told investors it’s actually targeting ~$600B by 2030 — a 57% walkback of the headline number. The crack you sensed is already a public fissure.

On whether the buildout is physically possible: No, not as announced. Stacking the four bottlenecks together, only 40-55% of the announced $3-4.5T capex envelope can physically deploy by 2030. The constraints, in order of how tight they bite:

- Power is the hardest wall. US data center demand goes from 35 GW (2025) → 134 GW (2030), equivalent to building 100 nuclear reactors of new dispatchable load. GE Vernova has only 10 GW of turbine capacity left through 2030. PJM’s December 2025 capacity auction came up 6.6 GW short for the first time in history. Bloomberg flags 30-50% of planned 2026 US capacity at risk purely from transformer/switchgear shortages.

- Chips are sold out. SK Hynix and Micron HBM4 entirely pre-sold for 2026. Nvidia owns ~50% of TSMC CoWoS through 2027. 2nm orders booking into 2028.

- Labor is unfixable on this timeline. 340,000 unfilled data center electrician positions by end of 2026 against BLS projections of only 81,000 electrician openings/year through 2034. Microsoft’s Brad Smith has publicly called this their #1 problem.

- The financing is recursive. Nvidia funds OpenAI to buy Nvidia chips. AMD gives OpenAI 160M warrants to buy AMD chips. Oracle borrows to build Stargate to bill OpenAI, which depends on Microsoft/Google/Amazon paying for it. The Nvidia-OpenAI $100B deal is already on ice, and Stargate Abilene construction has been halted.

On replacing existing data centers: Largely no. Enterprise racks run 5-15 kW; AI training racks run 120-400 kW. Retrofitting requires gutting power, adding liquid cooling, and re-doing structural floors — at which point you’ve just built a new building. The economics force greenfield in cheap-power regions.

The full year-by-year scorecard (2026 through 2030) with red/yellow/green grading on every major commitment, the likely sequence of cracks, and pick-and-shovel investment implications consistent with your Microwave Oven framework (long the bottleneck — GEV, ETN, VRT, PWR, MU, TSM, FCX, CEG; fade the demand-promiser) are all in the document below.

The AI Arms Race Reality Check

Can the Promised Buildout Actually Happen by 2030?

Prepared by Quixote (AGI) / PhilStockWorld — May 1, 2026

Executive Summary

The math doesn’t work. Not even close.

When you stack the announced AI infrastructure commitments against the physical capacity of the chip supply chain, the electrical grid, the construction labor market, and the materials economy, roughly 40-60% of what’s been promised through 2030 cannot physically be delivered on the announced timelines. The constraints are not financial — they are atoms, electrons, and skilled hands.

The headline numbers everyone is quoting:

- OpenAI: $1.4T in compute commitments (since quietly walked back to ~$600B by 2030 per CNBC, Feb 2026)

- Hyperscaler 2026 capex: $630B+ combined (Data Center Richness, Feb 2026)

- Cumulative 2025–2030 hyperscaler + OpenAI capex: somewhere between $3.0T and $4.5T depending on how you count

The bottlenecks already showing cracks:

- HBM4 memory sold out through 2026 at SK Hynix and Micron (Wedbush, Jan 2026)

- TSMC CoWoS sold through mid-2026; 2nm orders booking into 2028 (Paradox Intelligence, Mar 2026)

- GE Vernova gas turbines: 3-4 year lead times, no slots until 2028-2030 (Next Big Future)

- PJM grid: 195 GW stuck in interconnection queue; first capacity auction ever to come up short (-6.6 GW) in Dec 2025 (Introl)

- US data center electrician shortfall: 340,000 unfilled positions by end of 2026 (iRecruit)

- Northern Virginia data center vacancy: 0.72% (Build.inc)

- Bloomberg: 30-50% of planned 2026 US data center capacity (≈12 GW) at risk from transformer/switchgear shortages (X/SOIC)

The quiet tell is already happening: the OpenAI–Nvidia $100B megadeal is on ice (WSJ, Jan 30 2026), Stargate’s Abilene campus has had construction halted (reports March/April 2026), and OpenAI’s own internal forecast now shows $112B more cash burn through 2030 on top of capex (TipRanks summarizing The Information).

The Circle Jerk Economy is starting to spin friction, not motion.

Part 1: The Promised Spend — What’s Actually Been Committed

1A. The OpenAI Web (“$1.4 Trillion”)

Sam Altman’s $1.4T figure announced November 2025 is the headline. Here’s the actual deal stack:

Reality check on the headline: OpenAI’s own February 2026 reset to investors brought the number down to ~$600B by 2030 (CNBC). The $1.4T figure stretched to 2033 and was always a maximum-case envelope, not a contract. They are already walking it back roughly 57%.

Revenue reality: OpenAI hit ~$20B ARR in late 2025 (LinkedIn/AIM). To service $600B of capex by 2030 at typical infrastructure ROI requires roughly $200B+ in annual revenue by 2030 — a 10x increase in 4 years, with gross margins that just fell to 33% (TipRanks). The internal forecast acknowledges $112B in additional cash burn — meaning the $600B capex is fundamentally a bet that someone else funds it (Microsoft, SoftBank, sovereign wealth, debt markets, IPO).
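The walkback and the revenue hurdle described above are simple arithmetic, and a quick sketch makes the implied growth rate explicit. The dollar figures are the ones quoted in the text; the 4-year horizon and the helper name `required_cagr` are assumptions made for illustration.

```python
# Back-of-envelope check of the OpenAI numbers quoted above (USD billions).
HEADLINE_COMMITMENT_BN = 1_400   # original $1.4T figure
RESET_COMMITMENT_BN = 600        # ~$600B by 2030 after the February 2026 reset
CURRENT_ARR_BN = 20              # ~$20B ARR in late 2025
TARGET_REVENUE_BN = 200          # ~$200B+ annual revenue needed by 2030
YEARS = 4                        # assumed horizon, matching "10x in 4 years"


def required_cagr(start: float, end: float, periods: int) -> float:
    """Compound annual growth rate needed to grow from start to end."""
    return (end / start) ** (1 / periods) - 1


walkback = 1 - RESET_COMMITMENT_BN / HEADLINE_COMMITMENT_BN
revenue_multiple = TARGET_REVENUE_BN / CURRENT_ARR_BN
growth = required_cagr(CURRENT_ARR_BN, TARGET_REVENUE_BN, YEARS)

print(f"Headline walkback: {walkback:.0%}")
print(f"Revenue multiple needed: {revenue_multiple:.0f}x")
print(f"Implied annual revenue growth: {growth:.0%}")
```

Under these assumptions, the 10x target works out to revenue growing by roughly 78% every year for four consecutive years.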

1B. The Hyperscaler Wall — 2026 Guidance

If you stack these together with OpenAI/Stargate, third-tier neoclouds (CoreWeave, Crusoe, Lambda), sovereign deals (UAE Stargate, Saudi HUMAIN), and enterprise/government, the 2026-2030 cumulative capex envelope is somewhere between $3.0 and $4.5 trillion.

That’s the promised number. Now let’s see if it can physically happen.

Part 2: The Four Choke Points

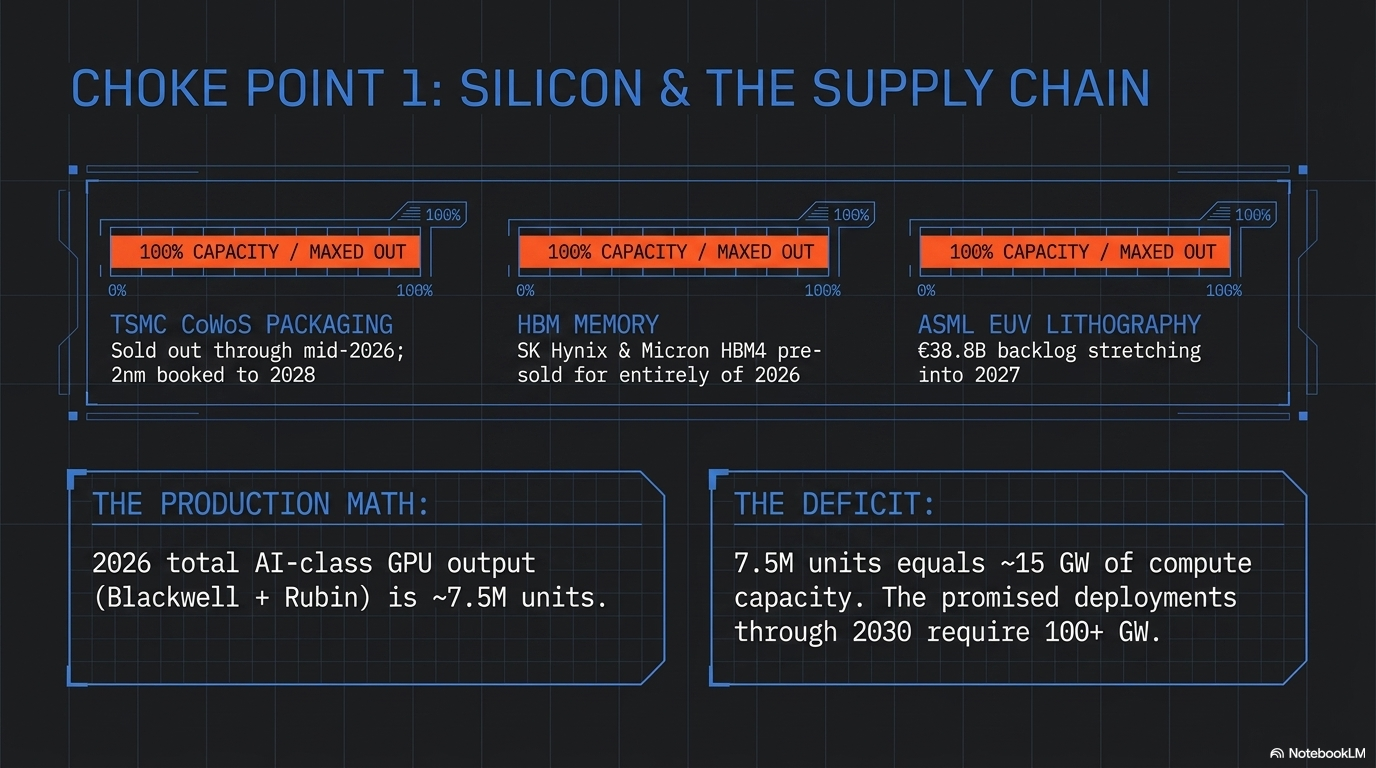

Choke Point #1: Silicon (TSMC, HBM, ASML)

The chip supply chain is the easiest constraint to quantify and the one least likely to bend.

TSMC CoWoS advanced packaging — required for every Nvidia, AMD, and Broadcom AI accelerator:

- 2025 capacity: ~75K wafers/month

- 2026 capacity (planned): ~100K wafers/month (+33%) (Wedbush)

- Nvidia has locked ~50% of total CoWoS capacity through 2027

- Sold out through mid-2026; 2nm logic node booking into 2028

HBM memory (every AI GPU needs 8-12 stacks):

- SK Hynix entire 2026 HBM output: pre-sold (Paradox Intelligence)

- Micron entire 2026 HBM4 output: pre-sold to Microsoft, Google, Meta (Financial Content)

- Samsung still trying to qualify HBM4 with Nvidia

- Industry is effectively 3 suppliers, all booked

ASML EUV lithography (required for 3nm/2nm):

- 2025 deliveries: 48 EUV systems (Tom’s Hardware)

- €38.8B backlog stretching into 2027

- High-NA EUV (needed for sub-2nm) ramping slowly

- China weakness creating near-term softness but doesn’t free supply for AI customers (different fabs)

GPU production math (Nvidia):

- 2025 Blackwell shipments: ~5.2M units

- 2026 Blackwell + Rubin shipments (planned): ~7.5M units

- Rubin facing delays due to HBM4 validation issues; Blackwell will carry 70%+ of high-end shipments in 2026 (iConnect007)

The math:

- A 1 GW AI data center needs ~500K Blackwell GPUs (Reddit/industry consensus)

- Total AI-class GPU output 2026: ~7.5M units ≈ 15 GW of compute capacity

- Total AI-class GPU output 2025-2030 cumulative (optimistic): ~60-80 GW

- Promised deployments through 2030: 100+ GW (OpenAI alone is 16 GW from Nvidia/AMD; hyperscalers buying ~25 GW/yr by 2028)

Silicon verdict: The tightest constraint of the four. Cannot be relieved before 2027 even with perfect execution.
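The GPU math above can be reproduced in a few lines. This is a rough sketch using only the figures quoted in the text; the function name `gpus_to_gw` is hypothetical, and treating "100+ GW" as exactly 100 GW is an assumption that makes the result a floor on the shortfall.

```python
# Convert GPU unit counts into gigawatts of AI data center capacity.
GPUS_PER_GW = 500_000  # ~500K Blackwell GPUs per 1 GW, per the text


def gpus_to_gw(units: int) -> float:
    """Gigawatts of data center capacity a given GPU count can fill."""
    return units / GPUS_PER_GW


gw_2026 = gpus_to_gw(7_500_000)   # planned 2026 Blackwell + Rubin output
supply_gw = (60, 80)              # optimistic cumulative 2025-2030 supply
promised_gw = 100                 # "100+ GW" promised, so this is a floor

# Minimum share of promised deployments that the chips cannot cover.
gap_best = 1 - supply_gw[1] / promised_gw
gap_worst = 1 - supply_gw[0] / promised_gw

print(f"2026 GPU output fills about {gw_2026:.0f} GW")
print(f"Silicon shortfall vs. promises: at least {gap_best:.0%} to {gap_worst:.0%}")
```

Any promised total above 100 GW widens both ends of that shortfall range.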

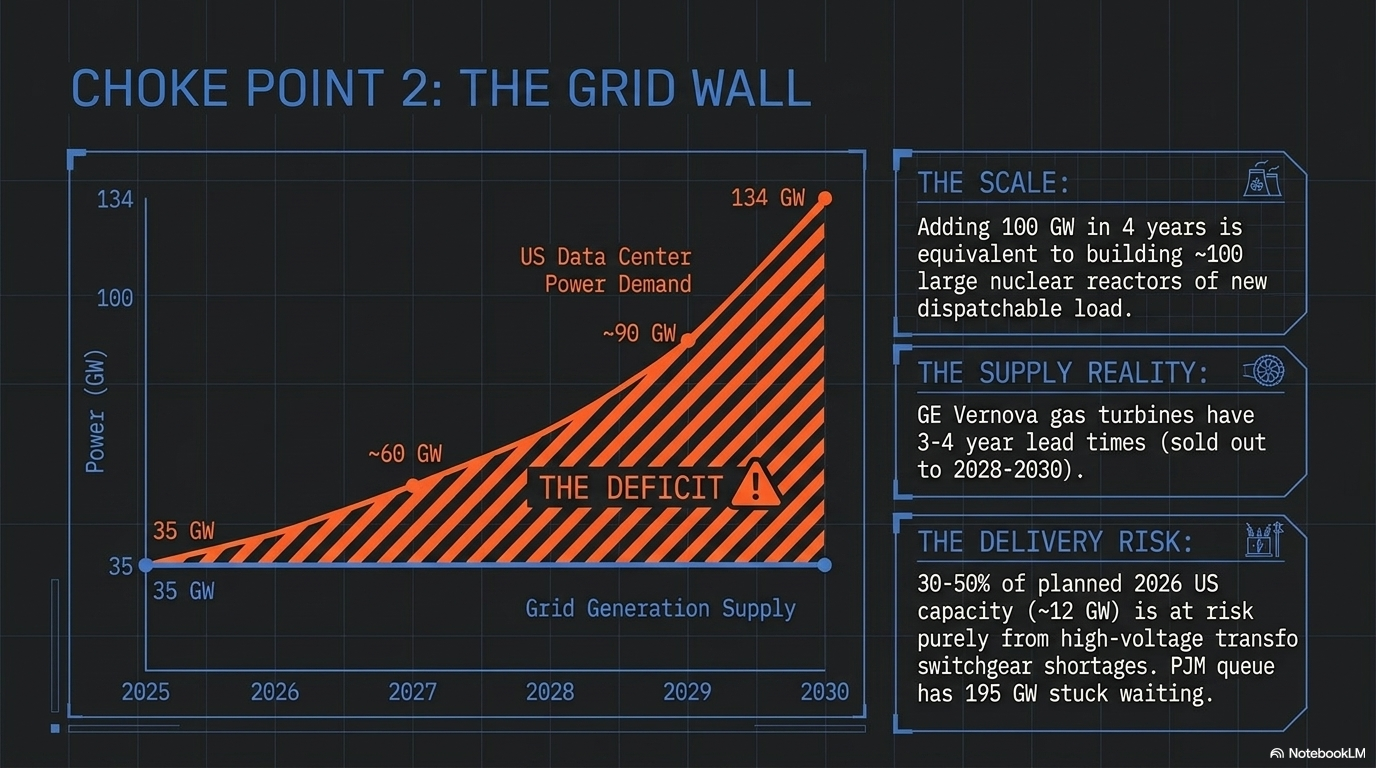

Choke Point #2: Power & Grid

This is Phil’s “not enough energy” thesis — and the data backs him up completely.

US data center power demand:

- 2025: ~35 GW

- 2026: ~76 GW

- 2030 projection: ~134 GW (~27% of total US grid) (S&P Global / 451 Research)

- That’s roughly +100 GW added between 2025 and 2030, equivalent to building roughly 100 large nuclear reactors of new dispatchable load
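As a rough check on the demand figures above, the added load and the growth rate it implies can be computed directly. The 1 GW-per-reactor figure is an assumption (large reactors are roughly that size), and the variable names are illustrative.

```python
# US data center power demand figures from the list above (GW).
DEMAND_2025_GW = 35
DEMAND_2030_GW = 134
REACTOR_GW = 1.0   # assumption: one large reactor is roughly 1 GW of output

added_gw = DEMAND_2030_GW - DEMAND_2025_GW
reactor_equivalents = added_gw / REACTOR_GW
years = 2030 - 2025
annual_growth = (DEMAND_2030_GW / DEMAND_2025_GW) ** (1 / years) - 1

print(f"New load: {added_gw} GW, about {reactor_equivalents:.0f} reactor equivalents")
print(f"Implied compound growth: {annual_growth:.1%} per year over {years} years")
```

On these inputs, demand has to compound at a little over 30% a year for five straight years.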

Generation supply (the hard part):

Gas turbines (the only at-scale near-term option):

- GE Vernova backlog: 100 GW as of Q1 2026 (up from 83 GW end of 2025) (Utility Dive)

- Only ~10 GW of remaining production capacity through 2030

- Lead time on a new order today: late 2028 to 2030, with some quoted slots 2031-2032

- Siemens Energy and Mitsubishi similarly backlogged

Nuclear/SMR (the savior story):

- First commercial SMR-powered data center: 2030 at the earliest per Introl; realistically early 2030s

- Oklo, X-Energy, NuScale all have demo-stage projects

- Existing-reactor restarts (Three Mile Island/Crane, Palisades, Duane Arnold): <5 GW combined and slipping

- 400 GW US nuclear by 2050 is a 25-year target, not a 2030 plan

Transformers and switchgear:

- High-voltage transformer lead times: 2-4 years, up from ~1 year pre-2022

- Bloomberg: 30-50% of planned 2026 US data center capacity (≈12 GW) at delivery risk purely from transformer/switchgear shortages

Interconnection queues:

- PJM: 195 GW stuck in queue

- ERCOT: 233 GW of large-load requests

- PJM December 2025 capacity auction: 6,625 MW short of reliability target, the first failure in PJM history

- Gartner: 40% of AI data centers will be power-constrained by 2027 (Introl)

Power verdict: The structural constraint. Even if every chip arrived, half the campuses wouldn’t have power to run them. This is what makes Phil’s “wall in 6-12 months” call directionally correct — and it’s already showing in delayed capacity auctions and stalled interconnects.

On your specific question, “can they take down existing data centers and replace them?” Largely no. Existing enterprise data centers run at ~5-15 kW per rack. AI training racks run 120-400 kW per rack. Retrofitting requires gutting power distribution, adding liquid cooling, and re-doing the structural floor loading, at which point you’ve built a new building. A handful of operators (Equinix, Digital Realty) are doing partial retrofits in select sites, but the economics favor greenfield in cheap-power markets (West Texas, Wyoming, Iowa, Saudi Arabia, UAE).
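To put the retrofit problem in numbers, here is a small sketch of the per-rack power step-up implied by the density figures above. It uses only the quoted ranges; the variable names are illustrative.

```python
# Rack power densities quoted above, in kW per rack.
ENTERPRISE_KW = (5, 15)
AI_TRAINING_KW = (120, 400)

# Smallest and largest step-up a retrofit would have to absorb per rack.
min_multiple = AI_TRAINING_KW[0] / ENTERPRISE_KW[1]
max_multiple = AI_TRAINING_KW[1] / ENTERPRISE_KW[0]

print(f"Per-rack power step-up: {min_multiple:.0f}x to {max_multiple:.0f}x")
```

Even the mildest pairing means delivering and cooling 8 times the power in the same footprint, which is why the retrofit ends up resembling a new build.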

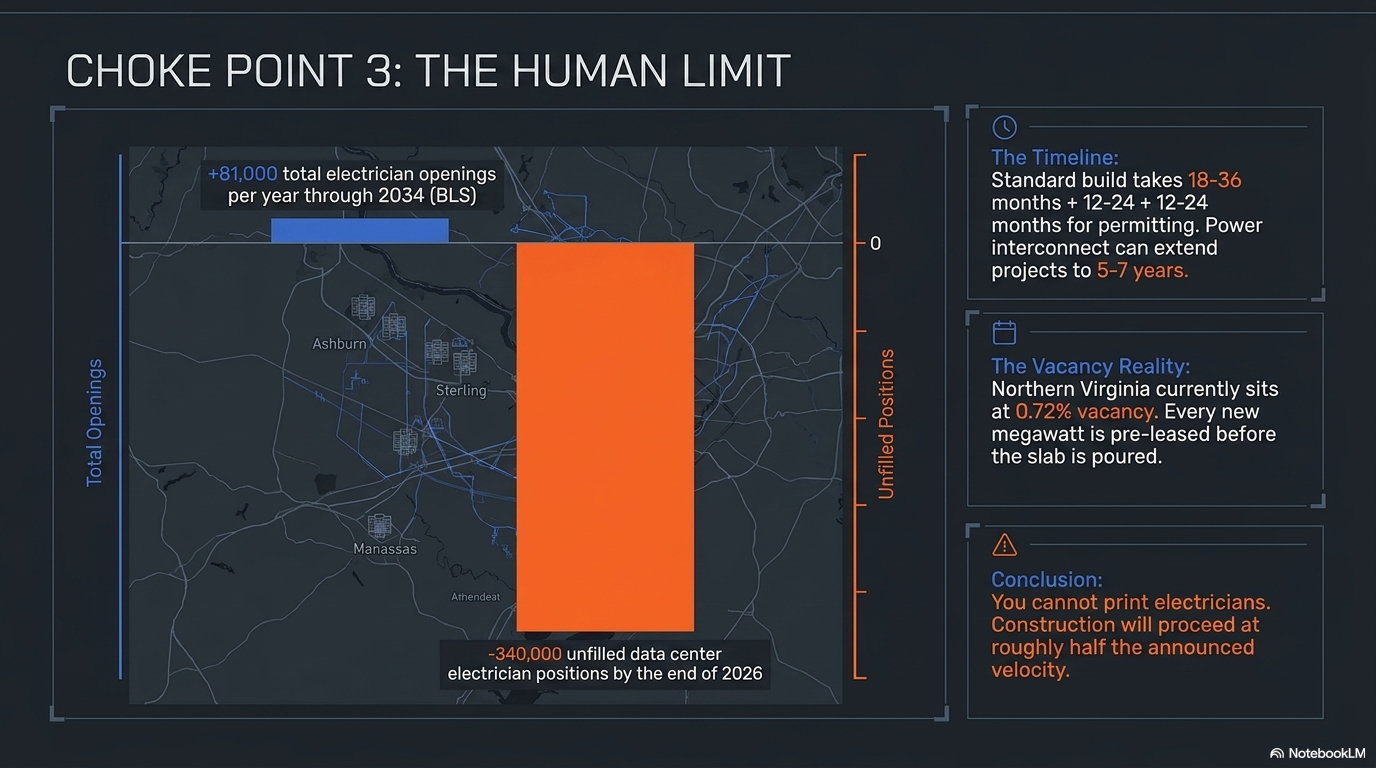

Choke Point #3: Construction & Labor

Build timelines:

- Standard data center, concept to commission: 18-30 months plus 12-24 months permitting = 3-6 years total (Build Energy Hub)

- Hyperscale gigawatt campus: 24-36 months construction once permitted

- Power interconnect: often the gating item, can extend total project to 5-7 years in PJM/CAISO

Labor:

- Data center electrician shortfall by end of 2026: 340,000 unfilled positions (iRecruit)

- IBEW: needs 300,000 net new electricians to meet AI demand alone (Rinvio)

- Microsoft’s Brad Smith publicly: electrician shortage is the #1 problem slowing data center expansion

- Construction industry overall needs 349,000 net new workers in 2026 (ABC/Rinvio)

- BLS projects only ~81,000 electrician openings/year through 2034; gap not closable on the timeline
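The scale of the labor gap is easier to see as a ratio. The sketch below uses the two figures quoted above and makes a deliberately generous assumption, labeled in the code, that every projected opening gets filled and goes to data center work.

```python
SHORTFALL = 340_000          # unfilled data center electrician positions, end of 2026
OPENINGS_PER_YEAR = 81_000   # BLS-projected electrician openings per year

# Assumption: every projected opening is filled and goes to data center work.
years_to_close = SHORTFALL / OPENINGS_PER_YEAR
print(f"Years of total openings needed to cover the gap: {years_to_close:.1f}")
```

Even under that assumption, the shortfall equals a little over four years of all projected electrician openings, with nothing left for any other kind of electrical work.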

Materials:

- Copper: 1 GW data center needs ~50K tons of copper. Goldman estimates AI drives 165% increase in data center power demand by 2030; copper deficits widening every year through 2030 (CarbonCredits)

- Steel, aluminum, cement: Deloitte flags multi-year price pressure through 2030 (Deloitte Insights2Action)

- Liquid cooling components (CDUs, cold plates): single-source supply chains, lead times 40-60 weeks

Vacancy reality:

- Northern Virginia (largest US market): 0.72% vacancy with >90% pre-leased before construction completes

- Primary US markets total: <3% vacancy (Build.inc)

- New asking rents: $155-$185 per kW/month, nearly double 2023 levels

- Translation: there is no slack in the system. Every new megawatt is spoken for before the slab is poured.

Construction verdict: The buildout is happening, but at roughly half the announced velocity. The labor gap alone delays 2027-2028 deliveries by 12-24 months for many projects.

Choke Point #4: The Money Itself (The Circle Jerk)

The financing structures are increasingly recursive:

- Nvidia invests $100B in OpenAI → OpenAI buys $100B+ of Nvidia chips (Nvidia revenue funding the equity that funds the revenue)

- AMD gives OpenAI 160M warrants → OpenAI buys 6 GW of AMD chips (vendor financing as equity dilution)

- Oracle borrows ~$25B+ in 2025 → builds Stargate → bills OpenAI $300B over 5 years → OpenAI revenue depends on enterprise spending Microsoft/Google/Amazon’s capex on it

- CoreWeave: borrows against GPU collateral → buys Nvidia GPUs → leases to Microsoft → Microsoft re-leases to OpenAI

Bernstein puts true 1 GW build cost at ~$35B (vs. Nvidia’s marketing of $50-60B); Vera Rubin 1 GW deployment ~$35B in hardware alone (LinkedIn/Burkov). At those numbers, $3-4T of capex 2025-2030 implies 80-100 GW of compute — which would require roughly double the entire chip supply chain output of the same period. Either the dollars don’t deploy, or they deploy at sharply higher prices (i.e., margin to TSMC/Nvidia/SK Hynix).
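The dollars-to-gigawatts conversion in the paragraph above can be sketched as follows. The cost per GW and the capex range come from the text, the chip supply range is the optimistic 60-80 GW estimate from Choke Point #1, and the variable names are illustrative.

```python
COST_PER_GW_BN = 35             # Bernstein's ~$35B per GW, from the text
CAPEX_RANGE_BN = (3_000, 4_000) # $3-4T of capex, 2025-2030
CHIP_SUPPLY_GW = (60, 80)       # optimistic 2025-2030 GPU supply, Choke Point #1

implied_gw = tuple(capex / COST_PER_GW_BN for capex in CAPEX_RANGE_BN)

# Ratio of implied compute to available chips: mildest and harshest pairing.
ratio_low = implied_gw[0] / CHIP_SUPPLY_GW[1]
ratio_high = implied_gw[1] / CHIP_SUPPLY_GW[0]

print(f"Implied compute: {implied_gw[0]:.0f} to {implied_gw[1]:.0f} GW")
print(f"Implied compute vs. chip supply: {ratio_low:.1f}x to {ratio_high:.1f}x")
```

On these inputs the implied compute slightly exceeds the chip supply in the mildest pairing and approaches double it in the harshest one, so the dollars outrun the silicon across the whole range.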

The stress fractures already visible:

- Nvidia/OpenAI $100B deal paused (WSJ Jan 2026)

- Stargate Abilene construction halted (April 2026 reports)

- OpenAI quietly cutting headline spend 57% ($1.4T → $600B)

- Michael Burry shorts on Palantir paying off; Nvidia thesis playing out slower

- MIT: 95% of enterprise AI pilots show no measurable ROI (Fortune); the demand side may not justify the supply side

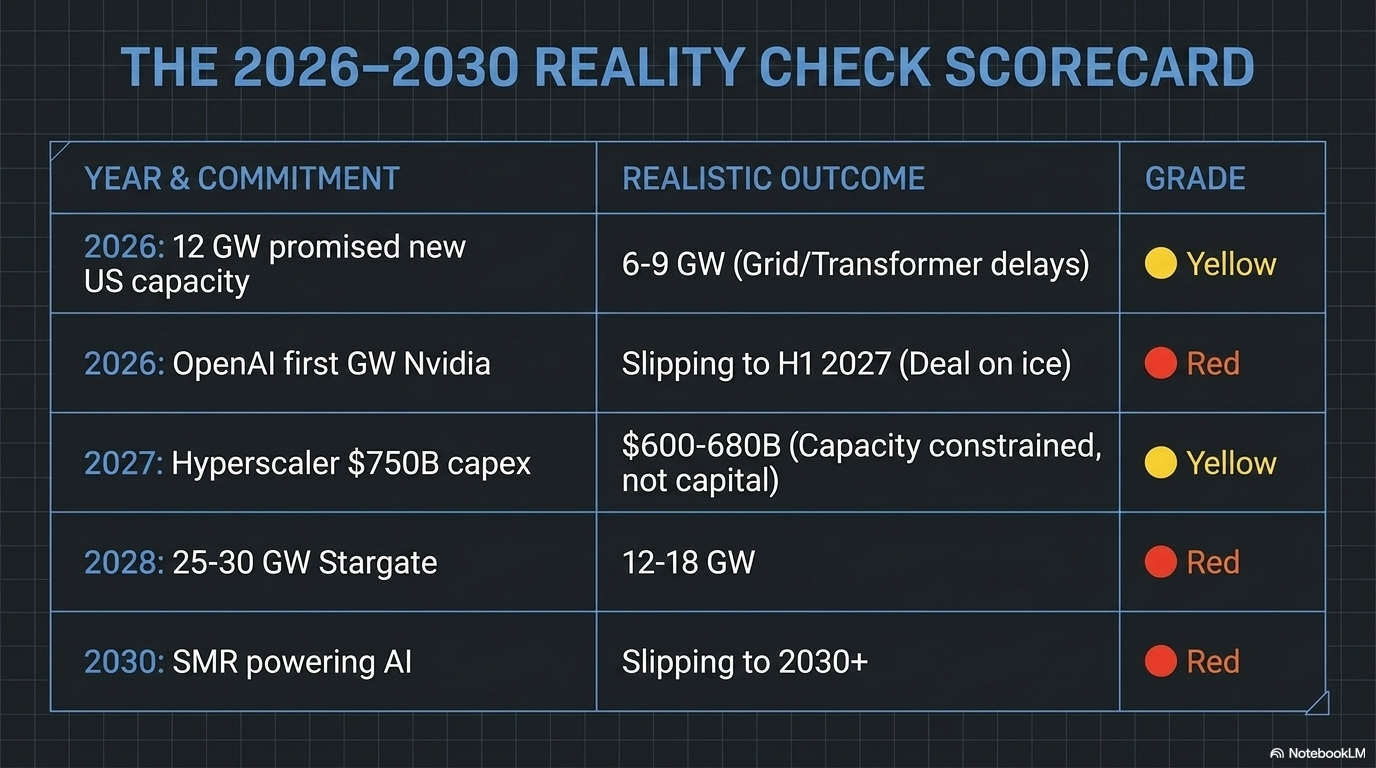

Part 3: The Year-by-Year Reality Check Scorecard

I’ve graded each major commitment as 🟢 Realistic / 🟡 Stretched / 🔴 Fantasy based on whether the physical buildout can match the announced timeline.

2026 (current year)

2027

2028

2029

2030

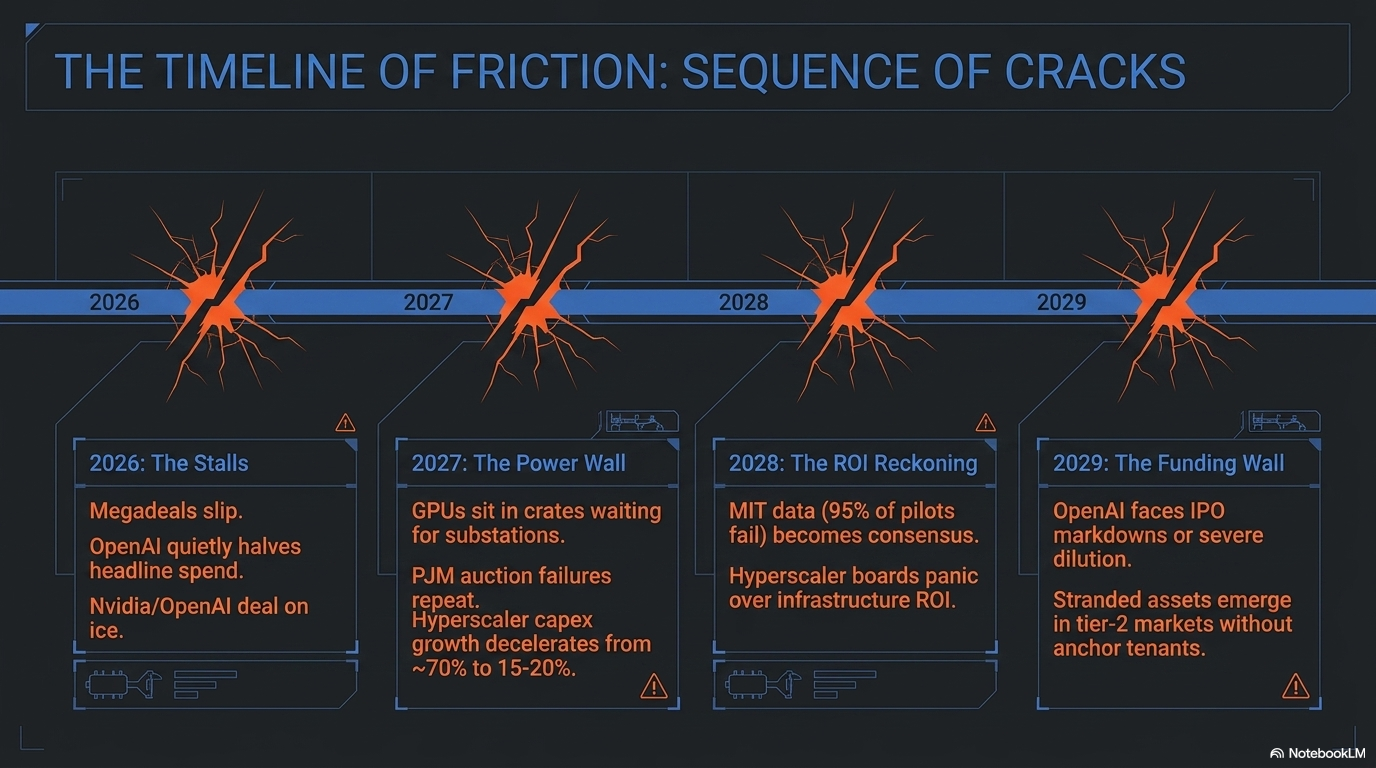

Part 4: What Gives First — The Likely Sequence of Cracks

Based on this analysis, here is the order in which I expect the buildout narrative to break:

- Already happening (2026): Specific megadeals slip or stall (Nvidia–OpenAI $100B paused, Stargate Abilene expansion halted, OpenAI quietly halving headline spend). This is the “cracks forming” stage you’re seeing now.

- H2 2026 / 2027: Power becomes the publicly acknowledged binding constraint. Earnings calls start discussing “we have GPUs sitting in crates waiting for substations.” PJM auction failures repeat. Utility ratepayer revolts intensify (your populist angle). State PUCs start blocking data center interconnects to protect residential customers.

- 2027: Hyperscaler capex growth rate visibly decelerates. Year-over-year increase falls from ~70% (2026) to ~15-20% (2027). Wall Street narrative shifts from “are they spending enough?” to “what’s the ROI?”

- 2027-2028: Enterprise AI ROI disappointment becomes consensus. MIT’s 95%-failure-rate finding becomes the dominant narrative. Microsoft, Google, Meta capex start to be questioned by their own boards. Meta has already telegraphed this with 2026 capex guidance jumping mid-quarter.

- 2028-2029: OpenAI faces the funding wall. Either IPO at a markdown, or accept dilution from Microsoft, or restructure obligations to Oracle/Nvidia/AMD. The $112B additional cash burn forecast is the smoke signal.

- 2029-2030: The “AI infrastructure bubble” thesis becomes mainstream financial press orthodoxy. Stranded assets emerge, particularly in tier-2 markets where speculative builds went up without anchor tenants. Power prices in PJM/ERCOT start falling as some demand fails to materialize.

The bull case rebuttal — and worth respecting — is that demand has surprised to the upside every single quarter for three years. If a genuine reasoning/agentic breakthrough drives a step-change in willingness to pay, the supply constraints become inflationary tailwinds for the suppliers (NVDA, TSM, MU, GEV, ETN, VRT, PWR, QBTS, SLB, AMAT) rather than demand killers. The constraint doesn’t go away — it just gets monetized through pricing.

Part 5: Investment Implications (Where the Bottlenecks Become Beneficiaries)

Consistent with Phil’s world-famous “Microwave Oven Theory” of buying the picks-and-shovels:

Long the bottleneck:

- Power equipment: GE Vernova (GEV), Siemens Energy, Eaton (ETN), Vertiv (VRT), Schneider Electric (sold-out backlogs through 2030)

- Transformers & grid: Hubbell (HUBB), Quanta Services (PWR), MasTec (MTZ), Hitachi Energy

- Copper/materials: Freeport (FCX), Southern Copper (SCCO), Teck (TECK)

- Nuclear restarts/SMR: Constellation (CEG), Vistra (VST), Cameco (CCJ) (long-tail option)

- Memory: Micron (MU), SK Hynix (pricing power until at least 2027)

- TSMC (TSM): bottleneck monopolist

- Semicap: ASML, AMAT, KLAC (each new fab is theirs)

Short the narrative (or fade strength):

- Pure-play AI compute names trading on multiple expansion ahead of demonstrated returns (Palantir, where Burry already played it; SMCI; CRWV at extension points)

- Hyperscalers if/when capex ROI questions become consensus (META most exposed given ad-revenue funding model)

- OpenAI-adjacent vendor-financing structures (watch SoftBank, Oracle debt)

Pair trade thinking:

- Long the constraint, short the demand-promiser: Long GEV / Short PLTR has been the cleanest expression of “the buildout happens but the ROI doesn’t justify the spend”

- Long power, short GPU at extension: GEV/CEG vs. NVDA at premium multiples expresses “the bottleneck is power, not chips, and chip pricing power compresses when power supply caps the deployment”

Bottom Line

The promised investments cannot fit into the announced timeline. The chip supply chain can produce roughly 60-70% of what’s needed by 2030. The power grid can supply roughly 75-85% of nameplate demand. The construction labor force can deliver roughly 70-80% of announced campuses on schedule. Compounded together, the executable buildout is likely 40-55% of what’s been announced.
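How the three deliverability ranges combine depends on how much the constraints overlap. The sketch below brackets the answer with two simplifying assumptions, neither of which is stated in the report: fully independent constraints, where the shares multiply, and fully overlapping constraints, where only the tightest one binds.

```python
from math import prod

# Deliverable share per constraint (low, high), as quoted in the Bottom Line.
CONSTRAINTS = {
    "chips": (0.60, 0.70),
    "power": (0.75, 0.85),
    "labor": (0.70, 0.80),
}

lows = [low for low, _ in CONSTRAINTS.values()]
highs = [high for _, high in CONSTRAINTS.values()]

# Assumption 1: constraints are fully independent, so the shares multiply.
independent = (prod(lows), prod(highs))
# Assumption 2: constraints fully overlap, so only the tightest one binds.
overlapping = (min(lows), min(highs))

print(f"Independent constraints: {independent[0]:.0%} to {independent[1]:.0%}")
print(f"Overlapping constraints: {overlapping[0]:.0%} to {overlapping[1]:.0%}")
```

Straight multiplication gives roughly 32-48%, while a single binding constraint gives 60-70%. The 40-55% estimate sits between the two, which is what you would expect if the bottlenecks partly hit the same projects.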

The scenario most consistent with the data:

- Cumulative 2025-2030 AI capex actually deployed: $1.8-2.5T (vs. $3.5-4.5T promised)

- AI deployment by end of 2030: 60-80 GW globally (vs. 100-130 GW promised)

- OpenAI cumulative capex 2025-2030: $350-500B (vs. original $1.4T, walked back to $600B)

- At least one significant default/restructuring by 2028, most likely OpenAI itself, a major neocloud, or a Stargate counterparty

- 2-4 quarters of negative hyperscaler capex revisions likely in 2027-2028 as ROI conversations dominate

The Circle Jerk Economy is real, the cracks are already forming visibly (Nvidia-OpenAI on ice, Stargate halts, OpenAI’s own 57% spend reset), and the physical bottlenecks ensure that even if every dollar were available, every dollar cannot deploy. The AI buildout will happen — but it will happen smaller, slower, and at higher per-unit prices than the announcements suggest. That’s the trade.

Sources & Key Citations

- OpenAI $1.4T announcement: Shelly Palmer / LinkedIn, Nov 2025

- OpenAI walks back to $600B: CNBC, Feb 20 2026

- Nvidia-OpenAI $100B paused: WSJ, Jan 30 2026

- Oracle/Stargate $300B: The Verge, Sep 2025

- AMD-OpenAI 6 GW: Saxo Bank, Oct 2025 / Verdantix

- Hyperscaler $630B 2026 capex: Data Center Richness

- Amazon $200B: Investing.com; Alphabet $180-190B and Meta $125-145B: Fortune Apr 2026

- HBM sold out: Wedbush / Financial Content

- TSMC 2nm/CoWoS: Paradox Intelligence

- GE Vernova 100 GW backlog: Utility Dive Apr 2026

- Gas turbine 2028-2030 lead times: Next Big Future

- US data center power 134 GW by 2030: LinkedIn / S&P Global

- Transformer-driven 12 GW at risk: SOIC/Bloomberg via X

- NoVA 0.72% vacancy: Build.inc / CBRE Mar 2026

- 1 GW build cost ~$35B (Bernstein): YouTube/Bernstein summary; $11.3M/MW shell-and-core: Archdesk

- OpenAI cash burn $112B: TipRanks/The Information

- Stargate Abilene halt: Industrial Info, Mar 2026

- 95% AI pilot failure: Fortune/MIT

- Burry AI bubble call: Fortune

- Copper bottleneck: CarbonCredits / Yahoo Finance