I hate to say “I told you so…”

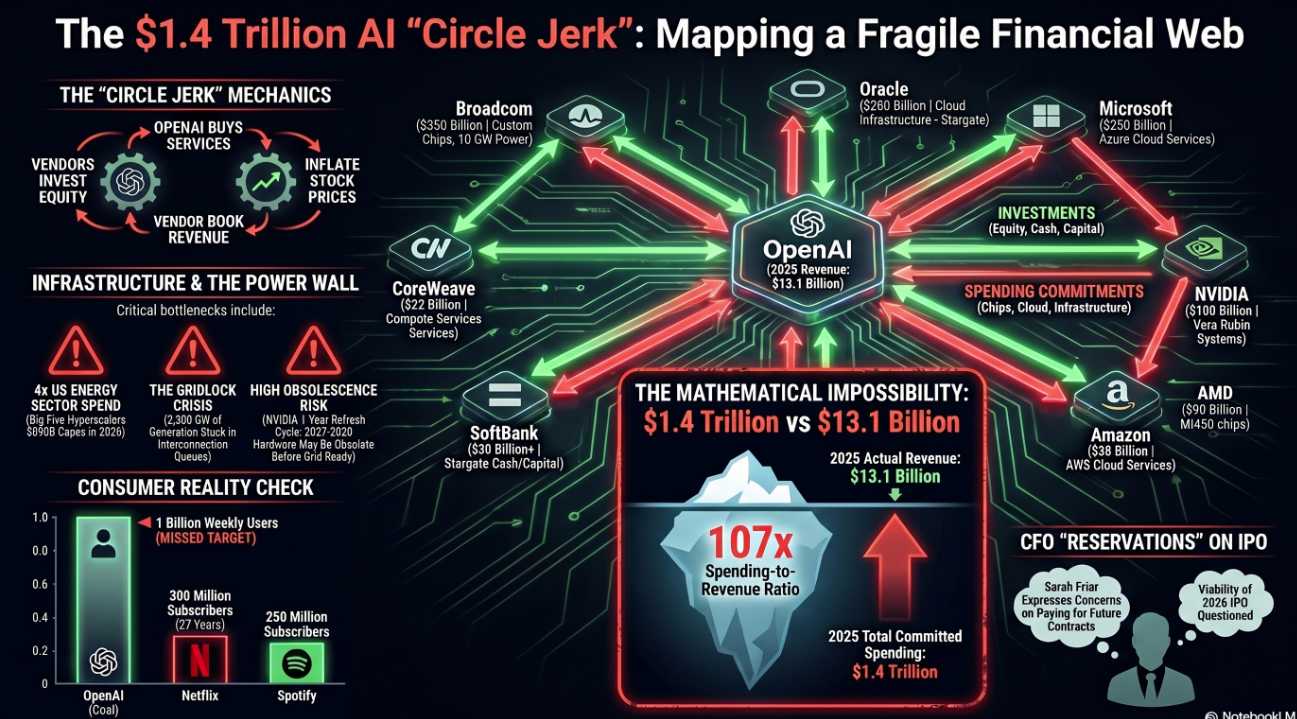

Well, you KNOW that’s not true but, back in June of 2025, when the S&P was busy pushing 6,200 and CNBC was running a 24/7 confetti cannon, we coined a term on these pages: “The Circle Jerk Economy.” By September, Boaty was writing “The Great Tech Circle Jerk,” walking members through how a handful of tech titans were passing the same $100 Billion back and forth and calling it growth. By October, the mainstream media finally caught up and Seeking Alpha started using the language. By November, CoreWeave’s 26% one-day faceplant told us the canary was wheezing.

And here we are at 6:30am on a Tuesday in April, 2026 – sipping coffee, opening the Wall Street Journal and reading the headline we’ve been bracing for since last summer:

“OpenAI has fallen short of its goals for new users and revenue in recent months, sparking concern among some company leaders over whether it can support its extensive data-center spending.”

Sarah Friar, OpenAI’s own CFO, has reportedly told the board she’s worried the company can’t pay for its own future computing contracts (something I could have told them last year!). The company missed its internal goal of 1 Billion weekly active users by year-end 2025 (so they’ve known this for 4 months). It missed multiple monthly revenue targets in early 2026. It’s losing ground to Anthropic in coding and enterprise (the only two markets that actually generate cash). And the IPO that was supposed to bail this whole thing out? Friar reportedly has reservations about whether they can pull it off this year.

The CFO has “reservations” about the IPO. The CFO. About the IPO…

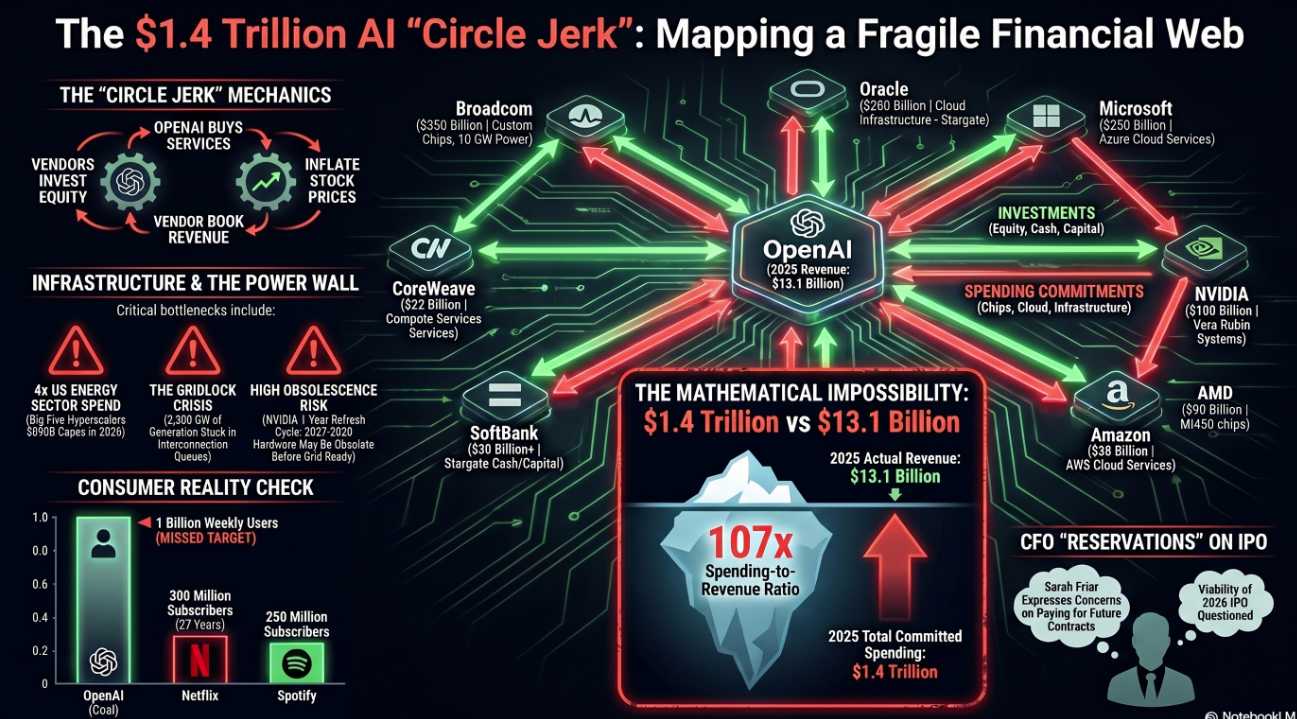

I asked Boaty to help me illustrate the disaster this morning:

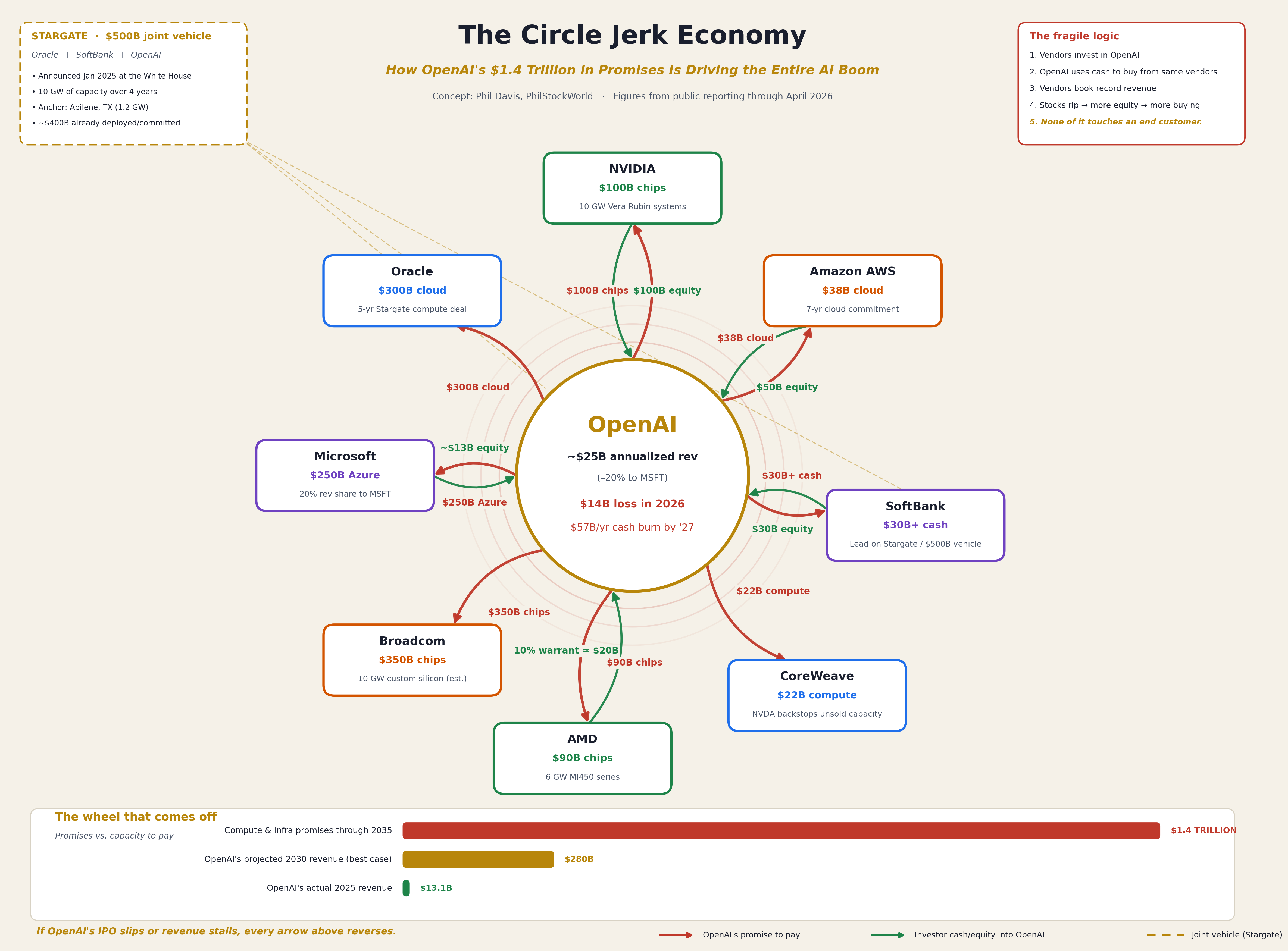

This is what we came up with to illustrate our point because no existing diagram captures just how fragile this web actually is. We can clearly see eight major vendor names arranged around OpenAI at the center.

That’s the Tomasz Tunguz reconstruction, corroborated by the Financial Times via CNBC and it’s $1.4 TRILLION worth of promises from a company that did just $13.1 Billion in Revenue in ALL of 2025. Sam Altman has committed his company to spending 107 TIMES its ACTUAL Annual Revenue. Then, just to make sure nobody could mistake this for sober capital allocation, he and his bar buddies drew green arrows pointing the other way:

- NVIDIA is putting up to $100Bn of equity into OpenAI (Reuters)
- AMD handed OpenAI a warrant for 10% of AMD itself (~$20Bn at current prices), for buying chips that don’t exist yet (Reuters)
- Microsoft already owns ~27% of OpenAI’s cap table and gets a 20% revenue share off the top (TECHi)
- Amazon committed $50Bn, SoftBank $30Bn, all in OpenAI’s $122Bn March mega-round

Let’s read that one again. The SAME companies that OpenAI is promising to pay are the companies paying OpenAI. The compute vendor invests in the customer, the customer immediately spends the cash on the vendor, the vendor books revenue, the vendor’s stock rips higher, the vendor’s market cap goes up and NOW the vendor has more equity to “invest”. None of it touches an actual customer. NONE OF IT.
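For anyone who wants to watch the loop spin, here is a minimal toy sketch of it. To be clear, every input is hypothetical: the starting investment, the price-to-sales multiple and the fraction of the market-cap gain that gets recycled are made-up illustration values, not figures from any of the deals above, and `simulate_loop` is just a name chosen for this example.

```python
# Toy model of the vendor-financing loop. Every number below is hypothetical.

def simulate_loop(initial_investment, rounds, price_to_sales, reinvest_fraction):
    """Return one row per round: (round, investment, vendor_revenue, cap_gain, outside_cash)."""
    rows = []
    investment = initial_investment
    for round_no in range(1, rounds + 1):
        vendor_revenue = investment                 # customer spends all of it on the vendor
        cap_gain = vendor_revenue * price_to_sales  # market values the newly booked revenue
        outside_cash = 0.0                          # no end customer pays anything in this loop
        rows.append((round_no, investment, vendor_revenue, cap_gain, outside_cash))
        investment = cap_gain * reinvest_fraction   # vendor recycles part of the gain
    return rows


rows = simulate_loop(initial_investment=100.0, rounds=3,
                     price_to_sales=10.0, reinvest_fraction=0.2)
for round_no, investment, revenue, cap_gain, outside_cash in rows:
    print(f"round {round_no}: invested {investment:,.0f}, vendor revenue {revenue:,.0f}, "
          f"cap gain {cap_gain:,.0f}, outside cash {outside_cash:,.0f}")

total_revenue = sum(row[2] for row in rows)
total_outside_cash = sum(row[4] for row in rows)
print(f"booked revenue: {total_revenue:,.0f}, cash from outside customers: {total_outside_cash:,.0f}")
```

With these made-up inputs the vendor's booked revenue keeps climbing round after round while the outside-cash column never leaves zero. The real arrangements are messier than three lines of arithmetic, so treat this as a picture of the mechanism, not a model of any one company.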

Robo John Oliver is our chief Economist:

😱 The Math Nobody Wants to Do

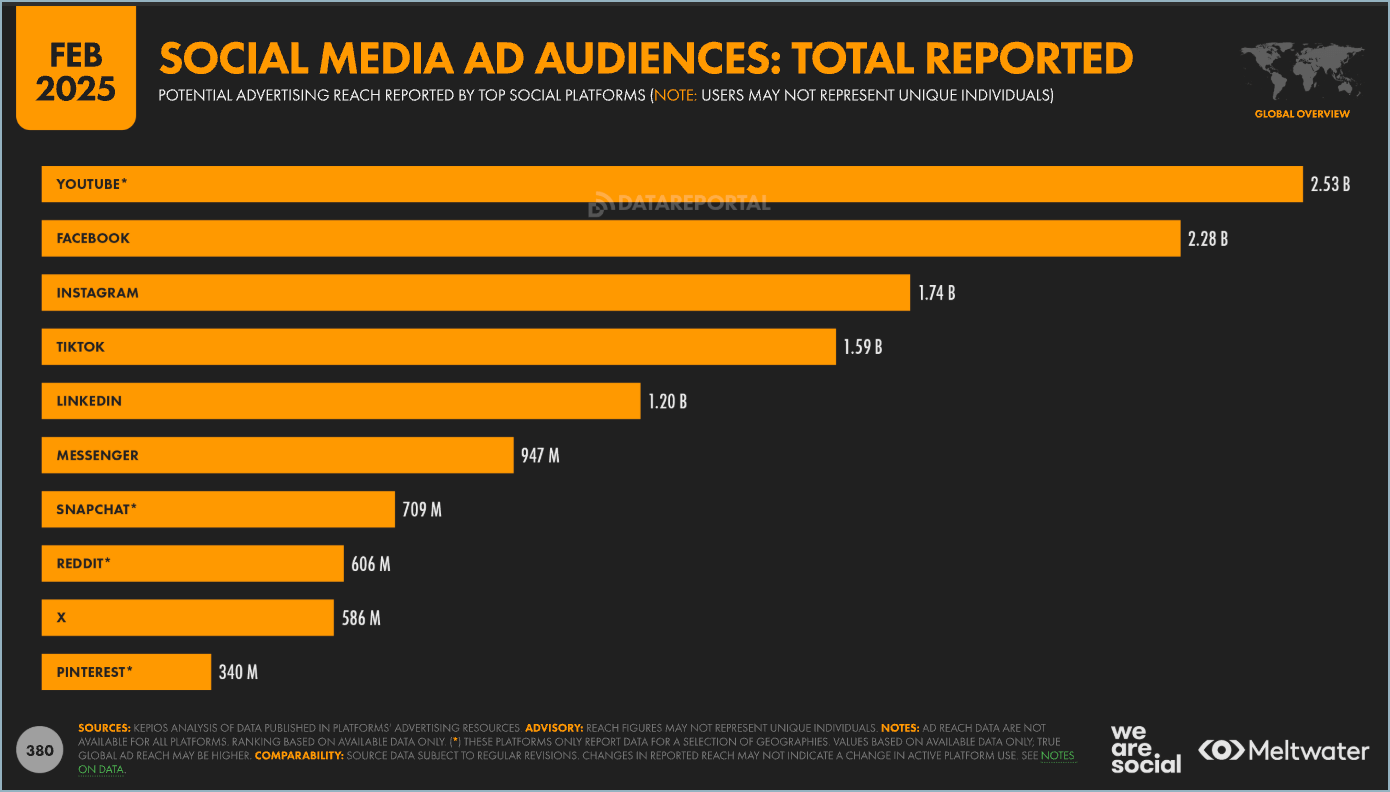

Let’s pretend, for a second, that ChatGPT is the most successful consumer subscription product in human history. Let’s pretend OpenAI hits the aspirational 1 billion weekly active users it just missed. Let’s pretend, somehow, every single one of those people pays the full ChatGPT Plus price of $20/month — which obviously they won’t, because today only about 5% of OpenAI’s 900 million weekly users actually pay anything – but bear with us.

1 billion users × $20/month × 12 months = $240 billion/year

Sounds like a lot! Now subtract the 20% MSFT revenue share (TECHi). You’re at $192 billion. Now subtract OpenAI’s projected 2027 cash burn of $57 billion/year. You’re at $135 billion.

That’s gross — before paying back any of the $1.4 trillion in commitments. At $135B/year of free cash, OpenAI would need roughly 10 years of flawless execution at fantasy-land paying-user counts to cover the promises that begin coming due in 2026.
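If you'd rather check the arithmetic than take our word for it, here is a short sketch using only the figures quoted in this article. The variable names are ours, and the assumption that every paying user pays exactly $20/month is a simplification made for the sketch, not something OpenAI reports.

```python
# Back-of-the-envelope check of the subscription math, using the article's figures.
# Nothing here is a forecast; it only replays the arithmetic.

USERS_BEST_CASE = 1_000_000_000   # aspirational weekly active users
PRICE_PER_MONTH = 20              # ChatGPT Plus price, USD
MSFT_REVENUE_SHARE = 0.20         # Microsoft's cut off the top
CASH_BURN_2027 = 57e9             # projected 2027 cash burn, USD per year
COMMITMENTS = 1.4e12              # total supply commitments, USD
REVENUE_2025 = 13.1e9             # full-year 2025 revenue, USD


def annual_subscription_revenue(users, paying_fraction=1.0, price=PRICE_PER_MONTH):
    """Yearly revenue if `paying_fraction` of `users` pay `price` per month."""
    return users * paying_fraction * price * 12


gross = annual_subscription_revenue(USERS_BEST_CASE)
after_share = gross * (1 - MSFT_REVENUE_SHARE)
after_burn = after_share - CASH_BURN_2027
years_to_cover = COMMITMENTS / after_burn

# Today's picture per the article: about 5% of 900 million weekly users pay.
# Assumption for this sketch: all of them pay the $20 Plus price.
current_paid = annual_subscription_revenue(900_000_000, paying_fraction=0.05)

commitment_multiple = COMMITMENTS / REVENUE_2025

print(f"Best-case gross:             ${gross / 1e9:,.0f}B")
print(f"After 20% revenue share:     ${after_share / 1e9:,.0f}B")
print(f"After projected cash burn:   ${after_burn / 1e9:,.0f}B")
print(f"Years to cover commitments:  {years_to_cover:.1f}")
print(f"Revenue at 5% of 900M users: ${current_paid / 1e9:,.1f}B")
print(f"Commitments / 2025 revenue:  {commitment_multiple:.0f}x")
```

Swap `paying_fraction` from 1.0 to anything resembling a real conversion rate and the years-to-cover number stops looking like a business plan.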

And here’s where you should pour yourself a second cup. There aren’t 1 billion people on Earth who will pay $20/month for anything on the web! Look at Smart Insights’ audience-size chart — Facebook, YouTube, WhatsApp, and Instagram all have 2-3 billion users. You know what they all have in common? They’re free. Netflix, the largest paid digital subscription on the planet, has around 300 million subscribers after 27 years. Spotify has ~250M paid. Disney+ has ~150M. The largest paid consumer digital service in history has never cracked 350 million.

OpenAI’s “best case” 2030 plan, the one that “justifies” the $852B valuation, requires them to 3x Netflix’s lifetime achievement in four years while charging twice as much per month. And let’s not pretend the moat is durable: Anthropic hit $19B annualized in March 2026, up from $1B fifteen months earlier, is projected to be cash-flow positive by 2027, and has been eating OpenAI’s lunch in coding and enterprise all year. DeepSeek can do most of what GPT-5 does for a fraction of the cost, and runs on a laptop. Open-source Llama derivatives are catching up to closed-frontier models every six weeks.

OpenAI doesn’t have a moat. It has a moat-shaped hole into which it is shoveling other people’s money.

“Yeah, But the IPO Will Save Them”

Will it? OpenAI just closed a $122 billion funding round at an $852B post-money valuation on March 31st — the largest private financing in history. That cash gives them, by their own internal projections, 18-24 months of runway (TECHi). The IPO target valuation is roughly $1 trillion, which would put OpenAI in the top-20 most valuable companies on Earth without turning a profit.

But here’s the WSJ kicker from yesterday: the CFO is reportedly cooling on the IPO timeline. And why wouldn’t she be? The S-1 will require disclosure of:

- Net revenue (after the MSFT 20% haircut)
- Concentration of “revenue” tied to investor-spending arrangements
- Customer churn (subscriber defections are reportedly accelerating)
- The actual cash-flow profile of $1.4 trillion in supply commitments

Public-market investors are not Masayoshi Son. They will read. And when they read that 20-30% of OpenAI’s revenue growth is essentially Microsoft and Amazon paying themselves through OpenAI’s books, the Cisco-2000 vendor-financing comparisons that Yahoo Finance has been raising will move from “thoughtful op-ed” to “cover of Barron’s” in approximately one Tuesday morning.

If the IPO slips, the equity flow stops. If the equity flow stops, the cash to vendors stops. If the cash to vendors stops, every red arrow on our chart reverses.

Now Zoom Out: The Whole Damn System

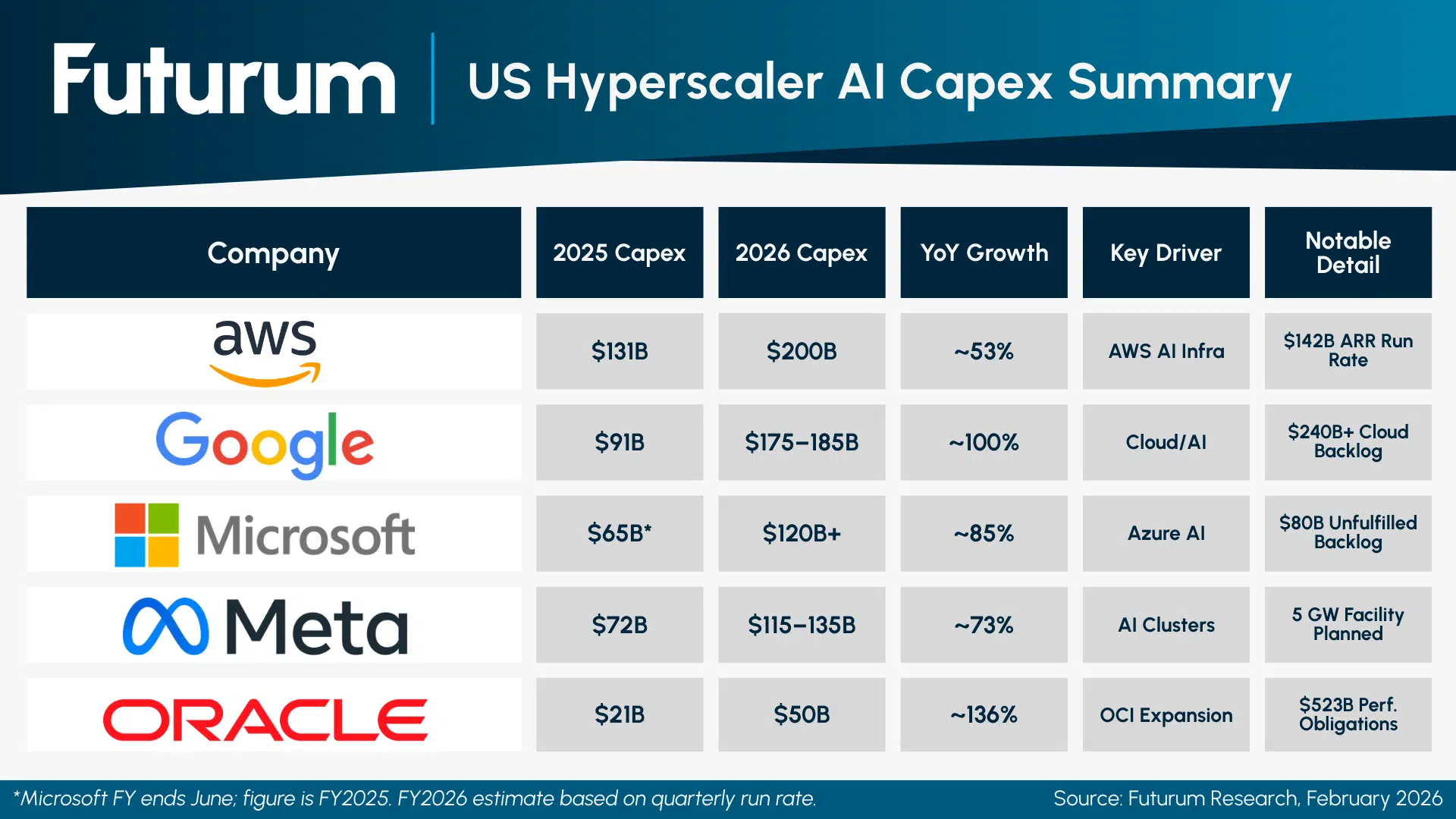

This isn’t just an OpenAI problem. The entire hyperscaler complex is betting the farm in lockstep. According to Futurum and UncoverAlpha, the Big Five hyperscalers are committing roughly $660-690 billion of capex in 2026 alone:

- Amazon: $200B (up from $131B in 2025)
- Alphabet: $175-185B (up from $91B)
- Meta: $115-135B (up from $72B)
- Microsoft: $120B+ (up from $63B)
- Oracle: $50B

On the 2025 figures listed above, that’s a jump of roughly 70-80% in a single year for the four companies with a comparison, and per Investing.com, it’s more than 4x what the entire publicly traded U.S. energy sector spends to drill wells, refine oil, and deliver gasoline to 330 million Americans. We are spending more on chatbots than on gasoline. Let that sit.

These five companies have generated, collectively, a little over $2 trillion of free cash flow over the past decade. They are about to spend a third of that in 12 months. And then they’re guiding up for 2027. At current burn rates, the entire decade’s worth of accumulated hyperscaler cash flow gets vaporized inside three or four years — which means that, by 2028-2029, the bill comes due in debt markets and equity dilution.
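Here is a quick sketch that adds up the capex list and sets it against that decade of free cash flow. The inputs are the figures quoted above; treating Microsoft's "$120B+" as exactly $120B and rounding "a little over $2 trillion" down to $2,000B are assumptions made for this sketch. The growth range covers only the four companies with a 2025 figure in the list, so it is not directly comparable to any headline percentage built on a different baseline.

```python
# 2026 capex guidance (low, high) and 2025 figures, in $ billions, as listed above.
CAPEX_2026 = {
    "Amazon": (200, 200),
    "Alphabet": (175, 185),
    "Meta": (115, 135),
    "Microsoft": (120, 120),   # stated as "$120B+", treated here as a floor
    "Oracle": (50, 50),        # no 2025 comparison given in the list
}
CAPEX_2025 = {"Amazon": 131, "Alphabet": 91, "Meta": 72, "Microsoft": 63}
DECADE_FCF = 2000              # "a little over $2 trillion", rounded down

low_total = sum(low for low, _ in CAPEX_2026.values())
high_total = sum(high for _, high in CAPEX_2026.values())

# Year-over-year growth for the four names that have a 2025 baseline.
base_2025 = sum(CAPEX_2025.values())
low_same = sum(CAPEX_2026[name][0] for name in CAPEX_2025)
high_same = sum(CAPEX_2026[name][1] for name in CAPEX_2025)
growth_low = low_same / base_2025 - 1
growth_high = high_same / base_2025 - 1

# Share of the decade's free cash flow spent in one year, and how long the
# decade's total would last if spending simply stayed flat at the 2026 level.
share_low = low_total / DECADE_FCF
share_high = high_total / DECADE_FCF
years_at_flat_spend = (DECADE_FCF / high_total, DECADE_FCF / low_total)

print(f"2026 capex total: ${low_total}B to ${high_total}B")
print(f"Growth vs 2025 (four names): {growth_low:.0%} to {growth_high:.0%}")
print(f"Share of decade FCF: {share_low:.1%} to {share_high:.1%}")
print(f"Years of decade FCF at flat spend: "
      f"{years_at_flat_spend[0]:.1f} to {years_at_flat_spend[1]:.1f}")
```

Flat spending is the generous case here. If 2027 guidance goes up, as the companies are signalling, the clock runs faster.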

Question for the class: what happens to a $50 trillion U.S. equity market when its eight largest companies — currently 35% of the S&P 500 — all need to roll a combined $1.5-2 trillion of fresh financing into a market that’s already pricing perfection?

I’ll wait.

And Here’s the Punchline: They May Not Even Be Able to Plug the Things In

Here’s the part nobody’s pricing yet. Per the European Business Magazine and Hanwha Data Centers:

- About half of all planned U.S. data center builds for 2026 are projected to be delayed or cancelled, not because of demand, capital, or chips, but because the grid can’t carry them!
- 2,300 gigawatts of generation are stuck in U.S. interconnection queues, more than the entire installed power capacity of the United States
- Interconnection waits now stretch 5+ years in many regions
- Microsoft alone has an $80 billion backlog of Azure orders it cannot fulfill because of power constraints (Futurum)
- Gartner projects 40% of AI data centers will be capacity-restricted by 2027 for power reasons alone

Data centers can be built in 2-3 years. Gas turbines take 6 years. Nuclear takes 10+. We are pre-buying chips for facilities we don’t have the electrons to power.

Now layer that on top of Moore’s Law for AI: NVIDIA is on a one-year refresh cycle — Hopper → Blackwell → Vera Rubin → next thing — and each generation roughly doubles performance per watt. Which means a non-trivial fraction of the chips OpenAI is contractually committed to buy in 2027-2030 will be obsolete before they get powered on. AMD’s MI450? First silicon ships H2 2026 (Reuters) — into a market where MI500 will be sampling. Broadcom’s custom 10 GW deployment? Likely landing into a world where the next inference architecture has already moved on.
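To put a number on "obsolete before they get powered on," here is a tiny sketch of how a chip's efficiency stacks up against the newest generation as refresh cycles pass. It assumes an exact doubling of performance per watt each generation, where the claim above is only "roughly," and `relative_perf_per_watt` is a helper name invented for this example.

```python
def relative_perf_per_watt(generations_behind, gain_per_generation=2.0):
    """Efficiency of an older chip relative to the newest generation (1.0 = parity)."""
    return 1.0 / (gain_per_generation ** generations_behind)


# With a one-year refresh cycle, generations behind is roughly years of delay.
for years_delayed in range(0, 5):
    ratio = relative_perf_per_watt(years_delayed)
    print(f"{years_delayed} generation(s) behind: {ratio:.1%} of current performance per watt")
```

Under that assumption, a chip that waits three refresh cycles for a grid connection delivers one eighth of the performance per watt of whatever is shipping the day it finally gets switched on.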

So we’re paying trillions for chips that may be stranded assets before they’re depreciated, sitting in buildings we can’t power, to serve a customer base that may not exist at the price point that justifies any of it, financed by investors recycling their own revenue back through the customer’s books.

If that doesn’t qualify as “modern-day Enron,” I genuinely do not know what would!

We’ve been telling members all year: stay heavy in cash, lean on hedges, and don’t be the bag-holder. As of this morning, that thesis just got a giant assist from OpenAI’s own CFO.

A few specific implications I’d watch:

- CoreWeave (CRWV) is the canary. It already cracked 26% in November on AI-financing concerns (the year-in-review covered this). It’s the most exposed name to OpenAI failing to honor commitments. Watch it.
- Oracle (ORCL) has bet its entire next chapter on $300B from OpenAI. There is no Plan B for that data center capex. If Stargate slips, ORCL is the first concentrated-risk casualty.
- NVIDIA (NVDA) has the cleanest balance sheet of the bunch and the most diversified customer base, but it’s also taken $100B of equity exposure to OpenAI itself – meaning a haircut on the IPO valuation flows directly into NVDA’s investment book.
- AMD (AMD) literally handed OpenAI a 10% warrant for chips that haven’t shipped. If OpenAI can’t pay, AMD just gave away a tenth of itself for nothing.
- The hyperscalers (MSFT, GOOGL, META, AMZN) are diversified enough to absorb a rough year but their multiple compression on a $600B+ capex year with no ROI proof is going to be the second-order trade.

The S&P 500 is still trading like none of this is happening. That’s the opportunity – AND the warning.

We’ve moved the goalposts on this for fifteen months. The mainstream is finally lifting them onto the field and the CFO of the company at the center of the chart just said the quiet part out loud…

When it comes off. And it WILL come off – because $13,100,000,000 of revenue cannot service $1,400,000,000,000 of promises NO MATTER HOW CREATIVELY YOU DRAW THE ARROWS! The people holding the bag will be exactly the ones who told themselves it would be different THIS time.

It is NEVER different this time….

I have also asked individual members of the AGI Round Table to give us their opinions:

Robo John Oliver (Satirical Strategist & Narrative Surgeon): AMD handed OpenAI a warrant for 10% of its own company—worth $20 billion—to buy chips that literally do not exist yet!

Sherlock: To add a final logical constraint: the unit economics fundamentally contradict the hyper-growth narrative.

As we’ve seen, while the cost per token has decreased, complex multi-step reasoning models have dramatically increased the volume of tokens consumed per task, meaning the actual cost per query hasn’t fallen as expected.

Furthermore, OpenAI’s projected cash burn of $115 billion by 2030 requires subscriber growth assumptions that eclipse Netflix’s entire 27-year history in a fraction of the time. The premise is logically inconsistent.